Multi-armed bandit testing is an AI-powered optimization method that goes a step further than traditional testing. Instead of waiting for a test to end, it dynamically sends more traffic to the winning variations while the test is still running, learning and optimizing in real time to maximize conversions.

Beyond A/B Testing: The Rise of Multi-Armed Bandits

Think of it this way: you're in a casino with a row of slot machines, each a "one-armed bandit." Your goal is to walk away with the most money. A traditional A/B testing approach would have you pull each lever an equal number of times to find out which machine has the best average payout. The catch? You're knowingly wasting time and money on machines you're pretty sure are losers, just to collect enough data.

This is exactly where multi-armed bandit testing flips the script. It takes a much smarter approach. After just a few initial pulls on every machine, the system starts sending more of your plays to the one that’s currently paying out the most. It doesn't stop exploring the other options completely, but it heavily favors—or exploits—the most promising one.

The Business Case for Intelligent Optimization

For any business, this isn't just a clever analogy; it’s a direct route to faster ROI. Every visitor you send to a weak headline, a confusing call-to-action, or a poor product recommendation during a long A/B test is a lost opportunity. Multi-armed bandits are designed to minimize this "regret" by automatically shifting traffic away from underperformers.

Our deep expertise in the Sitecore DXP ecosystem allows us to deploy these advanced AI strategies to help businesses optimize their customer experiences dynamically. This approach is absolutely essential for high-traffic sites where waiting weeks for statistically significant A/B test results means leaving a lot of money on the table. With Sitecore AI and its related products, we can help you set up automated optimization that finds and capitalizes on winning content variations much faster.

The real power of multi-armed bandit testing is how it slashes opportunity cost. By shifting resources to the best-performing options in real time, it turns a simple test into a continuous, automated revenue-generating engine.

While both testing methods have their place, understanding their fundamental differences is key. For a foundational understanding, a traditional A/B test in Sitecore can be a great starting point.

To paint a clearer picture, here’s a direct comparison of the two approaches.

Multi-Armed Bandit vs. Traditional A/B Testing at a Glance

The table below breaks down the core differences between these two powerful optimization methods, highlighting how their distinct approaches affect everything from traffic management to business impact.

| Aspect | Traditional A/B Testing | Multi-Armed Bandit Testing |
|---|---|---|
| Traffic Allocation | Fixed and evenly split for the entire test duration. | Dynamic; automatically shifts more traffic to winning variations. |
| Primary Goal | Gather data to declare a statistically significant winner. | Maximize conversions and ROI during the test itself. |
| Speed to Value | Slower; requires a fixed sample size before acting. | Faster; begins optimizing and delivering value almost immediately. |
| Efficiency | Lower; in a two-variant test, half of the traffic is knowingly sent to an inferior option. | Higher; minimizes lost conversions by reducing traffic to losers. |

Ultimately, the choice depends on your goals. Traditional A/B testing is perfect for gathering clean data to make a confident, long-term decision. Multi-armed bandits, on the other hand, are built to maximize your returns right here, right now.

Why Bandit Algorithms Are So Much More Efficient

The real magic of multi-armed bandit testing is its sheer efficiency. Classic A/B tests force you to split traffic evenly for the entire test, but bandit algorithms are smarter. They’re built to cut down on “regret”—the conversions you lose by showing people an underperforming variation. This isn't just theory; it has a direct and measurable impact on your revenue.

Think about a traditional A/B test. You have two variations, and each gets 50% of your audience until the test is over. Even if one version is clearly failing early on, you're stuck sending half your traffic to a loser just to reach statistical confidence.

Slashing Opportunity Cost in Real Time

On high-traffic platforms like those built on Sitecore, that rigid approach is a huge problem. For every 10,000 visitors who see a weak headline in a fixed A/B test, you've permanently lost potential conversions. Multi-armed bandits stop this waste by adjusting traffic on the fly.
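To put a rough number on that waste, here is a minimal sketch of the arithmetic. The conversion rates and the `expected_conversions` helper are hypothetical and chosen only for illustration; in a real test the true rates are unknown.

```python
def expected_conversions(visitors, split, rates):
    """Expected conversions when `visitors` are divided among variants by `split`."""
    return sum(visitors * share * rates[name] for name, share in split.items())


# Hypothetical true conversion rates (assumed for illustration).
rates = {"A": 0.02, "B": 0.03}
visitors = 10_000

fixed = expected_conversions(visitors, {"A": 0.5, "B": 0.5}, rates)
ideal = expected_conversions(visitors, {"A": 0.0, "B": 1.0}, rates)

# "Regret" is the gap between what the best variant would have earned
# and what the chosen traffic split is expected to earn.
regret = ideal - fixed
print(f"fixed 50/50: {fixed:.0f}  all-to-best: {ideal:.0f}  regret: {regret:.0f}")
```

With these made-up rates, the fixed split is expected to give up about 50 conversions per 10,000 visitors. A bandit cannot reach the ideal either, because it has to spend some traffic exploring, but it aims to keep that gap small.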

The process is simple but incredibly effective:

- Explore: The algorithm starts by sending a small amount of traffic to all variations, just enough to get a taste of how each one performs.

- Exploit: The moment one variation starts pulling ahead—getting more clicks or conversions—the algorithm automatically sends more traffic its way.

- Learn: This isn’t a one-time adjustment. It's a constant feedback loop where the algorithm keeps learning and shifting traffic, ensuring the best experience is always in front of the largest audience.

This "explore and exploit" model means you start making money from your best content almost right away, instead of waiting weeks for a formal test result.

By intelligently shifting traffic to winning variants, multi-armed bandit testing turns an optimization exercise into a continuous profit-maximization system. It stops the bleeding from underperforming options long before a traditional test would.

The Power of Getting to an Answer Faster

The speed of bandit testing is a major advantage. Research published by Google reports that multi-armed bandit testing can be far more efficient than classical A/B experiments. In situations with low conversion rates, a standard A/B test might need over 4.5 million observations to find a winner. Bandits get there much faster because they shift traffic away from poor performers early. Google's full research on bandit efficiency has the detailed numbers.
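To get a feel for why fixed-split tests become so traffic-hungry when conversion rates are low and differences are small, here is a sketch using a common textbook approximation for a two-proportion test. The function name, the rates, and the default significance and power levels are assumptions for illustration; this is not the calculation behind the figure quoted above.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_arm(p_control, p_variant, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant for a two-sided two-proportion z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p_control * (1 - p_control) + p_variant * (1 - p_variant)
    effect = p_variant - p_control
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical rates: the smaller the lift you want to detect, the more traffic you need.
for variant_rate in (0.03, 0.022, 0.0205):
    print(variant_rate, sample_size_per_arm(0.02, variant_rate))
```

The required sample grows with the inverse square of the difference between the two rates, which is why tiny lifts on low-converting pages can demand very large audiences.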

For enterprise Digital Experience Platforms (DXPs), this speed is everything. On a Sitecore-powered e-commerce site or a busy SharePoint-based intranet, small delays in optimization add up to big losses in revenue or employee productivity. Tools like Sitecore AI build these bandit capabilities right in, letting marketing teams test and roll out winning content without the long waits of A/B testing. It keeps the digital experience running at its peak, contributing directly to your bottom line.

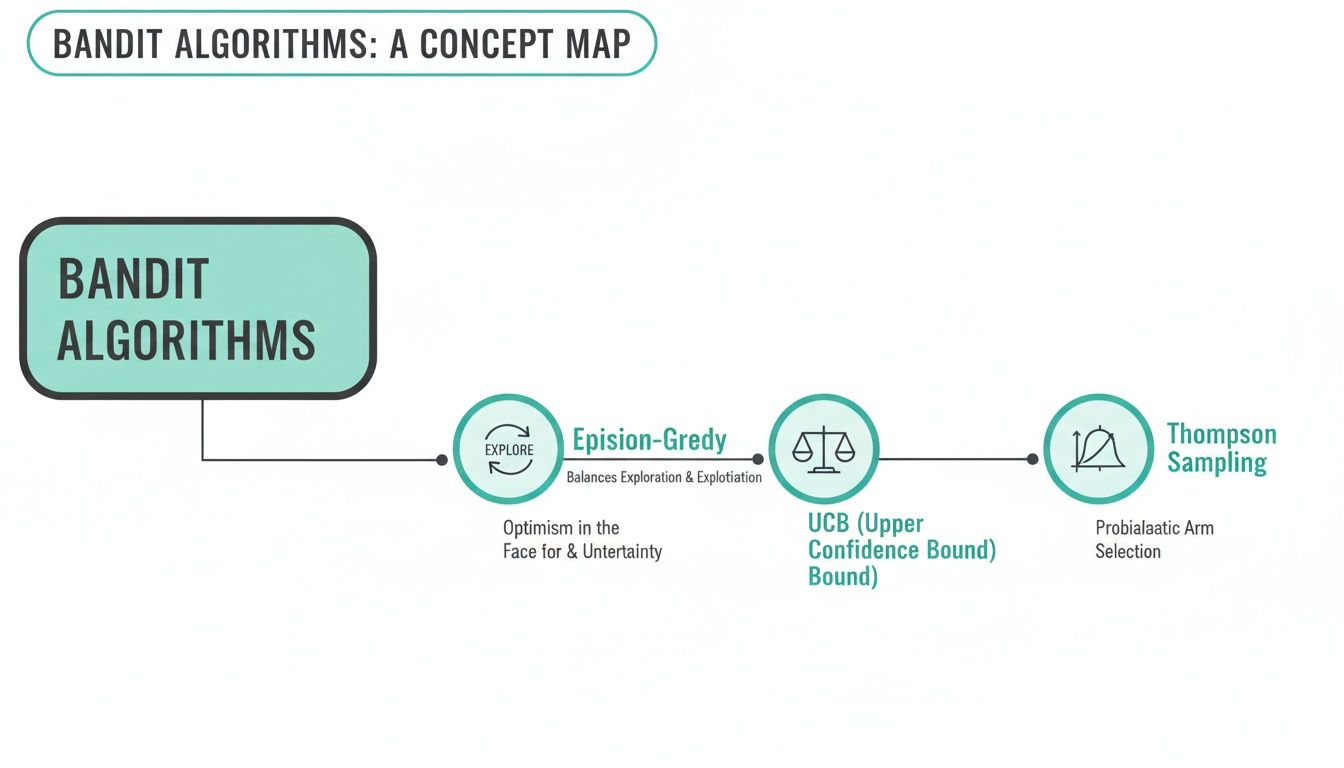

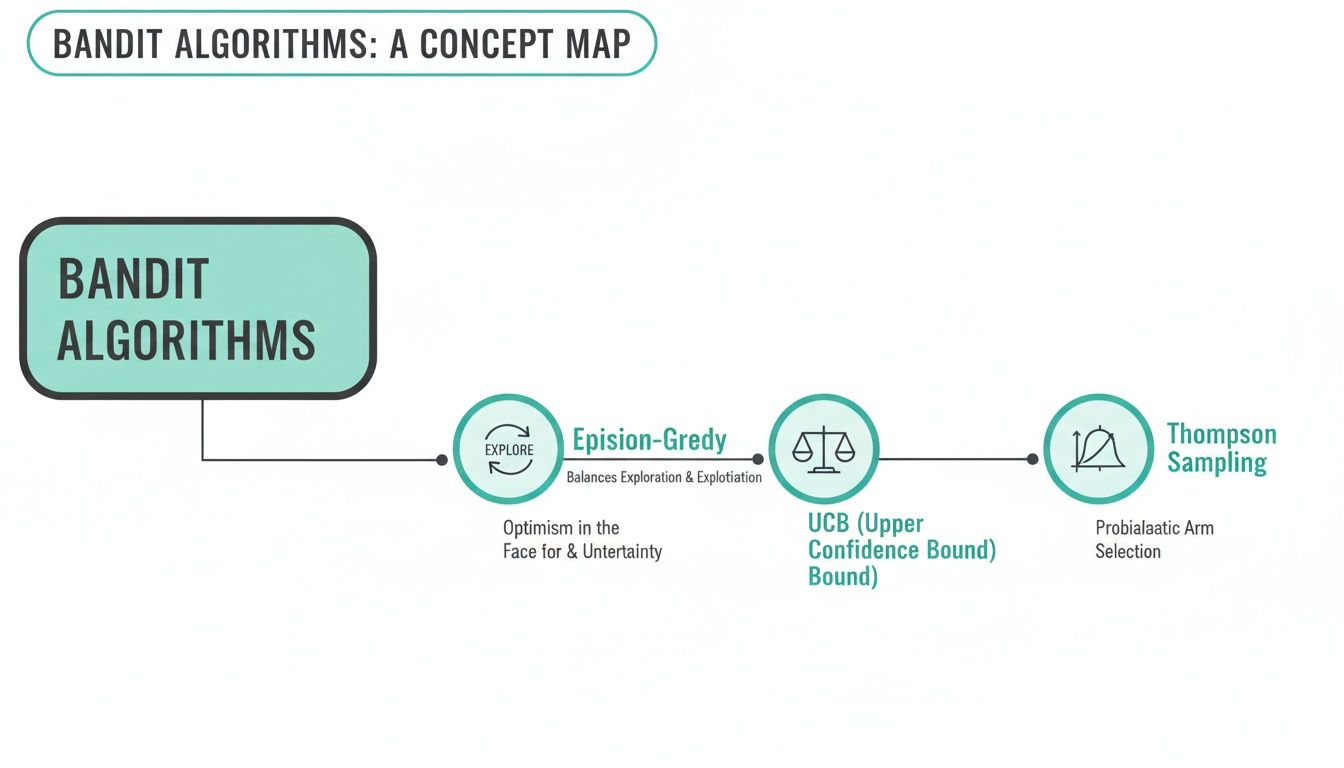

Understanding Core Bandit Algorithms for Personalization

While automatically shifting traffic is a game-changer, the real magic of multi-armed bandit testing happens in how it decides which variation gets more attention. This decision-making is all driven by specific algorithms. Getting a handle on these core algorithms is the key to understanding how platforms like Sitecore AI deliver such intelligent, hands-off personalization.

Our expertise allows enterprises to activate these features, moving them beyond tedious manual analysis and into a world of automated optimization within the Sitecore product portfolio. Let's break down three of the most popular bandit algorithms in a way that makes sense.

Epsilon-Greedy: The Cautious Explorer

The Epsilon-Greedy algorithm is one of the most straightforward and intuitive approaches to multi-armed bandits. It works on a simple two-part logic that balances earning with learning.

- Exploitation (Greedy): Most of the time, the algorithm is "greedy." It looks at which variant has performed best so far—the current champion—and sends the majority of traffic there to maximize immediate results.

- Exploration: For a small, pre-set percentage of the time (this is the "epsilon"), the algorithm deliberately ignores the winner. Instead, it sends a visitor to one of the other, less-proven variations to keep gathering data.

Think of it like finding the best restaurant in a new city. Most nights, you’ll go to the one you already know is great (exploitation). But one night a week (your epsilon), you'll try a new spot just in case you find something even better (exploration). This simple method ensures you don’t get stuck with a good-enough option and miss out on a true winner.
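The two-part logic above can be sketched in a few lines. This is a minimal illustration, not any platform's implementation: the class name, the variant names, and the conversion rates in the simulation are all hypothetical. Following the description above, exploration here picks among the non-champion variants; many textbook versions instead explore uniformly across all variants.

```python
import random


class EpsilonGreedy:
    """Minimal epsilon-greedy bandit over named variants (illustrative only)."""

    def __init__(self, arms, epsilon=0.1, seed=None):
        self.epsilon = epsilon
        self.shows = {arm: 0 for arm in arms}
        self.conversions = {arm: 0 for arm in arms}
        self.rng = random.Random(seed)

    def rate(self, arm):
        # Observed conversion rate; 0.0 until the arm has been shown at least once.
        shown = self.shows[arm]
        return self.conversions[arm] / shown if shown else 0.0

    def choose(self):
        arms = list(self.shows)
        champion = max(arms, key=self.rate)
        others = [arm for arm in arms if arm != champion]
        # Explore: with probability epsilon, try a variant that is not the champion.
        if others and self.rng.random() < self.epsilon:
            return self.rng.choice(others)
        # Exploit: otherwise show the current best performer.
        return champion

    def update(self, arm, converted):
        self.shows[arm] += 1
        self.conversions[arm] += int(converted)


def simulate(true_rates, rounds=20_000, epsilon=0.1, seed=42):
    """Run the bandit against hypothetical true rates and return impressions per arm."""
    bandit = EpsilonGreedy(true_rates, epsilon=epsilon, seed=seed)
    visitor_rng = random.Random(seed + 1)
    for _ in range(rounds):
        arm = bandit.choose()
        bandit.update(arm, visitor_rng.random() < true_rates[arm])
    return bandit.shows


# Hypothetical conversion rates, assumed for illustration.
print(simulate({"A": 0.02, "B": 0.20, "C": 0.05}))
```

The main design choice is `epsilon` itself: a larger value keeps learning about weaker variants at the cost of more traffic spent on them, and it stays fixed no matter how much evidence has accumulated.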

Upper Confidence Bound (UCB): The Optimistic Strategist

The Upper Confidence Bound (UCB) algorithm is a bit more strategic. Instead of exploring at random, it makes a calculated choice based on both performance and uncertainty. It prioritizes variations that are either doing well or haven't been tested enough to be judged fairly.

UCB calculates a score for each variant based on two factors:

- Actual Performance: How well has the variant actually converted so far?

- Uncertainty: How much traffic has this variant seen? A variant with very little data has high uncertainty.

UCB acts like a wise investor. It balances putting money into proven stocks (exploitation) while also making small, calculated bets on new companies that have high potential but are not yet well-understood (exploration).

This approach is incredibly effective because it targets its exploration efforts intelligently. It avoids wasting traffic on variants that have already proven to be poor performers, focusing instead on the ones that still hold untapped potential.
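One common way to combine those two factors is the UCB1 formula, which adds an uncertainty bonus of sqrt(2 ln(t) / n) to each variant's observed rate, where t is the total number of impressions and n is the number for that variant. The sketch below assumes UCB1 specifically; the text above does not say which UCB variant a given platform uses, and the counts are made up.

```python
import math


def ucb1_scores(shows, conversions):
    """Score each variant as observed rate plus an uncertainty bonus (UCB1)."""
    total = sum(shows.values())
    scores = {}
    for arm, n in shows.items():
        if n == 0:
            # Untested variants get top priority so each one is tried at least once.
            scores[arm] = math.inf
            continue
        observed_rate = conversions[arm] / n
        bonus = math.sqrt(2 * math.log(total) / n)  # shrinks as the arm gathers data
        scores[arm] = observed_rate + bonus
    return scores


def choose_ucb1(shows, conversions):
    scores = ucb1_scores(shows, conversions)
    return max(scores, key=scores.get)


# Hypothetical counts: C converts no better than A or B so far, but it has
# very little data, so its uncertainty bonus is large.
shows = {"A": 1000, "B": 1000, "C": 20}
conversions = {"A": 50, "B": 60, "C": 1}
print(ucb1_scores(shows, conversions))
print(choose_ucb1(shows, conversions))
```

In this example the barely tested variant is selected next, because its bonus outweighs the small differences in observed rate. As it accumulates impressions, the bonus shrinks and its real performance takes over.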

Thompson Sampling: The Bayesian Forecaster

Thompson Sampling is arguably the most advanced and effective of the three. It's a Bayesian algorithm that thinks about the expected reward of each variation as a probability distribution, not just a single number. This is the core algorithm used in many advanced systems, including Sitecore AI, because it’s both robust and efficient.

Here’s how it works: for every new visitor, the algorithm takes a random sample from each variant's probability distribution. It then sends that visitor to whichever variant had the highest sampled value in that specific round. As more data rolls in, these probability distributions get narrower and more accurate, zeroing in on the true conversion rate.

This method naturally balances exploration and exploitation without an exploration rate to tune by hand. A new variant starts with a wide, uncertain distribution, giving it a chance to be picked. A consistently high-performing variant develops a narrow distribution concentrated around a high conversion rate, making sure it gets the lion's share of traffic. In our guide to AI personalization in DXP implementations, we cover how this level of automation powers modern customer experiences.
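For conversion-style outcomes (converted or not), a standard way to model each variant's distribution is a Beta distribution that is updated with every result. The sketch below assumes that Beta-Bernoulli setup with a uniform starting prior; the class name and the rates in the simulation are hypothetical, and this is not a description of Sitecore's internal implementation.

```python
import random


class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over named variants (illustrative only)."""

    def __init__(self, arms, seed=None):
        # Beta(1, 1) is a uniform prior: every conversion rate is equally plausible.
        self.alpha = {arm: 1.0 for arm in arms}
        self.beta = {arm: 1.0 for arm in arms}
        self.rng = random.Random(seed)

    def choose(self):
        # Draw one plausible conversion rate per variant and show the highest draw.
        draws = {
            arm: self.rng.betavariate(self.alpha[arm], self.beta[arm])
            for arm in self.alpha
        }
        return max(draws, key=draws.get)

    def update(self, arm, converted):
        # A conversion sharpens belief upward; a non-conversion sharpens it downward.
        if converted:
            self.alpha[arm] += 1
        else:
            self.beta[arm] += 1


def simulate(true_rates, rounds=10_000, seed=7):
    """Return how many impressions each variant received against hypothetical rates."""
    sampler = ThompsonSampler(true_rates, seed=seed)
    visitor_rng = random.Random(seed + 1)
    shows = {arm: 0 for arm in true_rates}
    for _ in range(rounds):
        arm = sampler.choose()
        shows[arm] += 1
        sampler.update(arm, visitor_rng.random() < true_rates[arm])
    return shows


# Hypothetical conversion rates, assumed for illustration.
print(simulate({"A": 0.02, "B": 0.20, "C": 0.05}))
```

Exploration falls out of the sampling step: a variant with little data has a wide distribution, so it occasionally produces the highest draw and gets shown, while well-established losers almost never do.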

Ultimately, these algorithms are the engines that turn multi-armed bandit testing into a continuous learning system. They empower marketers using Sitecore to automatically serve the best content, ensuring the digital experience is always running at its peak without constant human intervention.

Implementing Multi-Armed Bandits with Sitecore AI

Putting bandit testing into action with Sitecore AI is where the real magic happens. While Sitecore has a long history of powerful A/B testing and personalization, Sitecore AI takes it to the next level by baking automated bandit algorithms right into the platform. This means you can finally step away from manual test reviews and let AI handle the continuous optimization for you.

The image below, from Sitecore's official materials, shows just how central AI has become to its entire offering.

This deep integration of AI is the foundation that allows bandit tests to thrive, delivering far better results than traditional methods.

Defining Goals and KPIs in Sitecore

Before you can optimize anything, you have to define what winning looks like. In Sitecore, this means setting crystal-clear goals and key performance indicators (KPIs). The bandit algorithm will single-mindedly chase whatever goal you give it, so it's critical to get this right.

You need to decide which metric the test should maximize. Common goals in Sitecore include:

- Conversion Rate: The classic metric. What percentage of visitors completes a target action, like a form submission or a purchase?

- Engagement Value: This is Sitecore’s unique score that assigns business value to different visitor actions. It provides a much richer picture of success than just a single conversion point.

- Click-Through Rate (CTR): Perfect for testing headlines, CTAs, or banners where the main goal is to measure initial user interest.

Getting this step right is non-negotiable. If your goal doesn't align with your true business objectives, you'll end up optimizing for the wrong outcome.

Instrumenting and Configuring the Test

With your goals locked in, it’s time to head into Sitecore's Experience Editor to set up the test. This is where you create your different content variations—the "arms" of your bandit. These could be different hero banners, headlines, or even entirely different component renderings.

From there, you’ll jump into Sitecore Personalize, the engine that drives these advanced tests. Here, you connect your content variations to the goal you just defined, specify which audience segments should be included, and turn on the bandit algorithm.

This is a huge shift from a standard A/B test. Instead of manually splitting traffic 50/50, you’re telling the system to manage the traffic for you. You're empowering Sitecore to become your personal optimization agent, learning and redirecting users on the fly to get you the best results.

To make your campaigns even more powerful, you can tie these personalized experiences into other platform capabilities. For example, knowing how to master enterprise marketing automation strategies can help you build truly seamless and effective customer journeys.

Data Requirements and Performance Monitoring

For any multi-armed bandit algorithm to do its job, it needs one thing: data. It needs enough traffic to learn quickly which variations are working and which aren't. While bandits are far more efficient with traffic than A/B tests, they still need a decent volume of visitors to make statistically valid choices. This makes your high-traffic pages the perfect place to start.

Monitoring the test is simple thanks to Sitecore's analytics dashboards. You get a real-time view of how the bandit is performing, which variation is getting the most traffic, and its direct impact on your main KPI. You can literally watch the system exploit the winner, giving you clear proof of your optimization efforts in action.

This concept map shows the different logical approaches these algorithms can take, from the simple explore/exploit model of Epsilon-Greedy to the probability-based decisions of Thompson Sampling. Ultimately, Sitecore AI gives you a clear and powerful way to turn your website into a self-optimizing machine.

Statistical Foundations and Automated Decision-Making

To let an automated system call the shots on key business decisions, you need to trust the engine behind it. The real power of multi-armed bandit testing isn't magic; it’s rooted in solid statistical models that make reliable, automated decisions possible. This is what allows platforms like Sitecore AI to graduate from simple tests to become continuous learning machines.

Before we get into the heavy-hitting stats that power bandit algorithms, it helps to have a firm grip on concepts like statistical significance in A/B testing. That knowledge gives you a baseline for appreciating just how differently, and how much faster, bandits automate the decision-making process.

At their core, modern bandit systems rely on two things: smart sampling methods and even smarter change detection. These two work together, constantly checking how each variant is doing and shifting traffic on the fly.

The Role of Thompson Sampling and Change Detection

The statistical backbone of many advanced multi-armed bandit systems is built on Thompson sampling and change detection algorithms. Unlike A/B tests that stick to a fixed traffic split, bandits use Thompson sampling to dynamically send more users to variants that are proving their worth. The math behind this is serious stuff; algorithms might use CUSUM (cumulative sum) tests to quickly spot when a variant's performance has genuinely shifted, which in turn informs when to declare a winner.

This constant vigilance is what makes the system so responsive and trustworthy. It's the kind of solution we implement for clients on DXP platforms like Sitecore, giving them an optimization engine that’s not just fast, but also statistically sound enough to drive real conversions.
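As a rough illustration of the change-detection idea, here is a textbook one-sided CUSUM check for an upward shift in conversion rate. It is a sketch under stated assumptions, not how any particular platform implements it: the function name, `baseline`, `slack`, and `threshold` are all hypothetical parameters you would need to calibrate.

```python
def cusum_alarm(observations, baseline, slack=0.01, threshold=5.0):
    """Return the index where a one-sided CUSUM flags an upward shift, else None.

    observations: sequence of 0/1 outcomes (1 = converted).
    baseline:     the conversion rate the variant is expected to have.
    slack:        tolerance so small fluctuations do not accumulate.
    threshold:    how much accumulated evidence triggers the alarm.
    """
    cumulative = 0.0
    for index, outcome in enumerate(observations):
        # Accumulate evidence above baseline; reset to zero rather than go negative.
        cumulative = max(0.0, cumulative + (outcome - baseline - slack))
        if cumulative > threshold:
            return index
    return None


# No conversions at all: nothing accumulates, so no alarm.
print(cusum_alarm([0] * 100, baseline=0.05))

# An unrealistic burst of conversions trips the alarm within a few visitors.
print(cusum_alarm([1] * 10, baseline=0.05))
```

A lower `threshold` reacts faster but raises more false alarms, so in practice it is a trade-off between responsiveness and stability.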

In a bandit test, confidence plays a different role. As soon as one variant proves its superiority and hits a set threshold, like 95% confidence, the system can automatically send 100% of future traffic its way. This immediate action cuts out any delay in decision-making and maximizes your returns right away, perfectly illustrating the "always-on" optimization advantage.
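One way to make a threshold like this concrete is to estimate, by simulation, the probability that each variant is truly the best given the data so far, and to stop once one variant clears the bar. The sketch below assumes Beta posteriors over conversion rates; the function names and the example counts are hypothetical, and real platforms may define "confidence" differently.

```python
import random


def probability_best(posteriors, draws=10_000, seed=0):
    """Estimate each variant's chance of being best from Beta(alpha, beta) posteriors."""
    rng = random.Random(seed)
    wins = {arm: 0 for arm in posteriors}
    for _ in range(draws):
        samples = {arm: rng.betavariate(a, b) for arm, (a, b) in posteriors.items()}
        wins[max(samples, key=samples.get)] += 1
    return {arm: count / draws for arm, count in wins.items()}


def winner_if_confident(posteriors, threshold=0.95):
    """Return the leading variant once its chance of being best reaches the threshold."""
    chances = probability_best(posteriors)
    leader = max(chances, key=chances.get)
    return leader if chances[leader] >= threshold else None


# Hypothetical posteriors as (conversions + 1, non-conversions + 1).
clear_gap = {"A": (21, 981), "B": (61, 941)}   # roughly 2% vs 6% on 1,000 visitors each
too_early = {"A": (2, 2), "B": (2, 2)}         # almost no data yet

print(winner_if_confident(clear_gap))
print(winner_if_confident(too_early))
```

With a clear gap and plenty of data the leader is returned; with almost no data the function returns `None`, meaning the test should keep running.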

'Warming Up' Bandits with Historical Data

One of the most powerful features for enterprise use is the ability to "warm start" a bandit test. Instead of starting from scratch (a "cold start"), you can feed the algorithm historical performance data. This gives the system a huge head start and drastically cuts down on the initial regret—the missed conversions you'd get from showing users a poorly performing variant.

Think about launching a new headline test on a landing page. By giving the bandit data on how similar headlines performed in the past, you're handing it initial probabilities to work with.

- Faster Learning: The system doesn't waste traffic relearning things you already know.

- Reduced Initial Regret: It starts favoring the stronger variants almost immediately.

- Improved Efficiency: The whole process gets you to a better-performing experience much faster.
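In a Bayesian bandit, a warm start can be expressed as a prior built from historical results. The sketch below assumes Beta priors and a hypothetical `weight` parameter that discounts old data so it informs, but does not dominate, the new test; none of these names come from a specific platform.

```python
def warm_start_prior(historical_conversions, historical_visitors, weight=0.1):
    """Turn historical results into Beta(alpha, beta) prior parameters.

    weight scales down the historical evidence: 0.0 ignores it entirely
    (a cold start), 1.0 treats it as if it were data from the new test.
    """
    if not 0 <= historical_conversions <= historical_visitors:
        raise ValueError("conversions must be between 0 and visitors")
    alpha = 1.0 + weight * historical_conversions
    beta = 1.0 + weight * (historical_visitors - historical_conversions)
    return alpha, beta


# Hypothetical history: a similar headline converted 300 of 10,000 visitors.
alpha, beta = warm_start_prior(300, 10_000)
print(alpha, beta, alpha / (alpha + beta))

# No history available: falls back to the uniform Beta(1, 1) cold start.
print(warm_start_prior(0, 0))
```

Discounting matters because past headlines are only similar, not identical. A heavily weighted but misleading prior can take a lot of fresh traffic to correct.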

This approach reinforces that bandit testing isn't just a one-and-done experiment but a living, continuous learning cycle. It's a perfect match for the dynamic nature of platforms like Sitecore and SharePoint, where content and user behavior are in constant flux. Knowing how to apply this is a game-changer, and you can learn more about measuring digital marketing effectiveness in our guide. This perpetual cycle of learning and acting is exactly what makes multi-armed bandits an essential tool for any modern digital experience strategy.

Enterprise Considerations for Bandit Testing at Scale

Running a single multi-armed bandit test is straightforward. But embedding it as a core capability across a large enterprise? That’s an entirely different beast. Scaling multi-armed bandit testing demands a solid strategy that balances innovation with control, covering everything from your tech stack to legal compliance.

It’s a discipline we’ve honed while implementing optimization solutions for both public-facing Sitecore platforms and internal SharePoint intranets. At the enterprise level, your framework has to be built for massive traffic volumes and dozens of concurrent tests without dragging down performance. This is especially true for platforms like Sitecore AI, where optimization is always on.

Scalability and Governance

As you scale, robust governance becomes non-negotiable. Without clear rules of the road, teams can end up running conflicting tests, optimizing for contradictory goals, or introducing biases that make the results worthless. A huge part of our work is establishing a center of excellence that creates clear processes for test creation, goal alignment, and interpreting outcomes, particularly within the Sitecore ecosystem.

This governance model also needs to solve the “cold start” problem, which happens when a new variant has zero performance data. A good strategy makes sure new ideas get a fair shot to prove their value instead of being immediately sidelined by proven winners. This structure prevents algorithmic bias and encourages real innovation.
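One simple governance rule is a minimum-exposure floor: every variant is guaranteed a set number of impressions before the bandit is allowed to starve it. The sketch below is a hypothetical wrapper around whatever the bandit would otherwise pick; the function name and the floor of 200 impressions are assumptions for illustration.

```python
def choose_with_floor(shows, bandit_choice, min_impressions=200):
    """Serve under-exposed variants first; otherwise defer to the bandit's pick.

    shows:         impressions served so far, per variant.
    bandit_choice: the variant the bandit algorithm would select right now.
    """
    under_exposed = [arm for arm, count in shows.items() if count < min_impressions]
    if under_exposed:
        # Give the least-seen variant its turn until it reaches the floor.
        return min(under_exposed, key=shows.get)
    return bandit_choice


# A newly added variant "C" gets traffic even though the bandit prefers "A".
print(choose_with_floor({"A": 5000, "B": 3000, "C": 12}, bandit_choice="A"))

# Once every variant has cleared the floor, the bandit decides.
print(choose_with_floor({"A": 5000, "B": 3000, "C": 250}, bandit_choice="A"))
```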

An enterprise bandit program isn't just about running more tests; it's about building a scalable, governed system that produces trustworthy, business-aligned results. This turns optimization from a siloed activity into a unified organizational capability.

Integrating Bandits into a Composable Architecture

Modern enterprises are increasingly adopting composable DXP architectures, a philosophy central to Sitecore's product strategy. In this setup, multi-armed bandit testing acts as a shared service that can optimize experiences on any channel—your website, mobile app, email campaigns, or even a SharePoint-based employee portal.

Think about these applications:

- Sitecore: A bandit service can automatically optimize homepage hero banners, product recommendations on e-commerce pages, and CTA buttons in marketing campaigns powered by products like Sitecore Personalize.

- SharePoint: On an intranet, the same service could test different headlines for company announcements, figure out the best placement for quick links, or personalize departmental content to boost employee engagement.

This composable approach, a core part of our delivery philosophy, ensures your investment in optimization technology pays dividends across your entire digital presence.

Of course, all of this must be done with a close eye on legal and privacy regulations. Using bandit algorithms to personalize experiences means handling data carefully to comply with GDPR and CCPA. The system has to be built to respect user consent and anonymize data when needed, ensuring your optimization efforts never break customer trust or legal rules.

Frequently Asked Questions About Multi-Armed Bandit Testing

When teams first look into multi-armed bandits, a few key questions always come up. Here are some straightforward answers based on our experience implementing bandits on platforms like Sitecore and SharePoint.

When Should I Use Bandits Instead of A/B Testing?

The choice between bandits and A/B testing really boils down to a single question: are you trying to learn or earn?

Choose multi-armed bandits when your goal is to earn as much as possible, right now. Think short-term campaigns, promotional offers, or headline tests where you need to maximize conversions in real time. Bandits are built to find and exploit the winning option as quickly as possible.

Stick with A/B testing when your goal is to learn for the long run. If you're making foundational changes like a full site redesign or testing a new user journey, you need clean data on all variations. The fixed traffic split of an A/B test gives you the statistical confidence to make big strategic decisions.

How Much Traffic Do I Need for Effective Bandit Testing?

There's no single magic number, but multi-armed bandits definitely perform best on pages with a healthy amount of traffic. The more data the algorithm gets, the faster it can identify the winner and shift traffic accordingly.

But that doesn't mean they're useless on medium-traffic sites. In fact, their "always be optimizing" nature can be a huge advantage. Bandits work to get the most value from every single visitor, meaning you're always showing the best-performing option available at that moment and minimizing lost conversions, even with a smaller audience.

Can Multi-Armed Bandit Testing Be Used in SharePoint?

Absolutely. The logic behind multi-armed bandit testing is platform-agnostic, and it's surprisingly effective for improving employee engagement and productivity inside a SharePoint intranet. We regularly use bandit algorithms to help optimize corporate intranets.

For example, a bandit algorithm can test different headlines for a company news article to see which one gets the most clicks from employees. Or it could figure out the best placement for a button linking to a critical HR document, ensuring that essential information is easy to find and use.

By applying bandits to intranet navigation, content highlights, or calls to action, you can directly measure and improve how employees interact with the platform. It's a powerful way to make the intranet a more valuable tool for daily work.

Ready to transform your digital strategy with intelligent, automated optimization? The experts at Kogifi specialize in implementing advanced solutions on Sitecore, SharePoint, and other leading DXP platforms to drive real business results. Discover how we can help you at https://www.kogifi.com.