Teams asking "what is a data cloud?" aren't looking for a textbook definition. They're dealing with a more immediate problem.

Marketing has journey data in one tool, commerce data in another, CRM records in Salesforce or Dynamics, service history in a ticketing platform, and product or contract data locked inside ERP. Sitecore sits on top of that mess and gets blamed when personalization feels shallow. IT then gets asked why the DXP can't "just know the customer" across channels.

It can't, at least not reliably, if the data model underneath is fragmented.

That's why the conversation around data cloud matters. In practice, a data cloud isn't just another repository. It's the operational layer that brings disconnected customer and business data into one governed system so platforms like Sitecore can personalize, automate, and activate experiences in real time.

Your Data Is an Asset. Why Is It Still in Silos?

A familiar pattern shows up in DXP programs. The website team has analytics. The CRM team has lead and account data. E-commerce owns orders and basket behavior. Service teams hold complaint history, renewals, and support signals. Nobody has the whole picture at the moment it matters.

That creates a false sense of maturity. A business may have strong tools and still deliver generic experiences because the systems don't talk in a way that supports decisioning.

Why silos break personalization

Sitecore can render different content to different audiences. It can connect with AI services. It can orchestrate journeys. But if the input data arrives late, arrives inconsistently, or isn't stitched to a durable identity, the output is weak.

Common symptoms look like this:

- Segments drift out of date: A customer buys yesterday, but today's campaign still treats them like a prospect.

- Profiles stay partial: Web behavior exists, but purchase history and service context don't.

- Teams duplicate work: Marketing creates audience logic in one tool while analytics rebuilds it somewhere else.

- Trust drops quickly: Once leaders see conflicting metrics across systems, they stop relying on personalization logic.

The urgency isn't hypothetical. According to published cloud computing statistics, the cloud market supporting this architecture reached $912.77 billion in 2025 and is projected to reach $1.614 trillion by 2030. The same statistics put global data at an expected 200 zettabytes by 2025, with 94% of enterprises using cloud services and 60% of business data already stored in the cloud.

Why this problem is getting harder

The old answer was to move selected records into a reporting database and refresh nightly. That still works for historical dashboards. It doesn't work for modern DXP use cases where content, offers, search, and AI prompts should respond to current behavior.

Unstructured content also adds pressure. Contracts, PDFs, support notes, transcripts, and product sheets often contain the context needed for better targeting, but most stacks don't extract that information cleanly. In those cases, teams often need tooling such as an AI-powered data extraction engine to turn messy documents into usable fields before they can activate anything downstream.

Disconnected systems don't just slow reporting. They reduce the quality of every decision made in the DXP.

A data cloud addresses that by making customer, content, transaction, and operational data available through one governed layer. If you're trying to solve identity, segmentation, and activation together, it helps to start with a practical view of customer data integration solutions rather than treating the website as the center of the universe.

Beyond the Buzzword: A Practical Definition of Data Cloud

The simplest answer to "what is a data cloud?" is this:

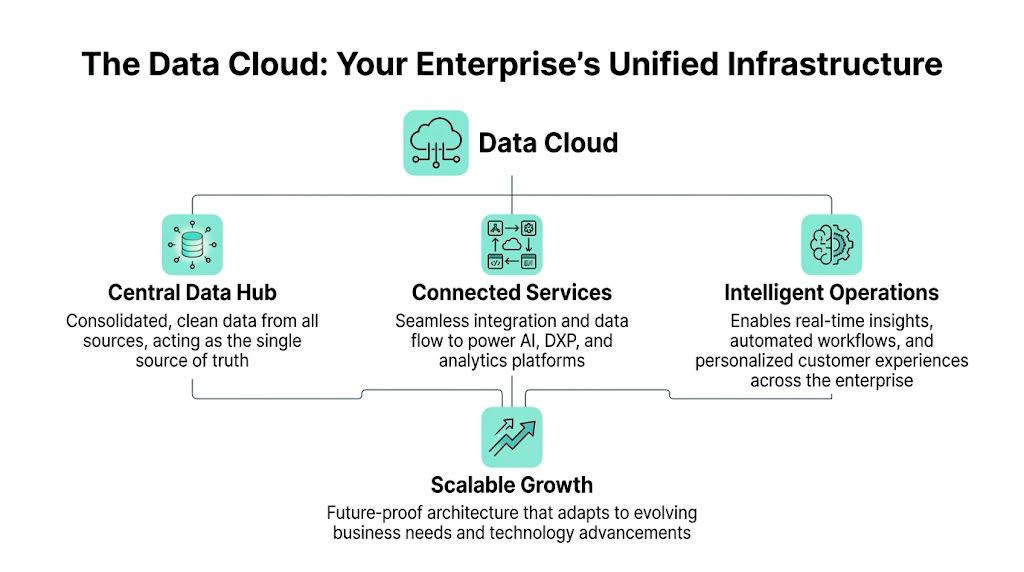

A data cloud is an open, cloud-based data infrastructure that unifies structured, unstructured, and semi-structured data from on-premises, public, private, and hybrid environments into a secure, scalable platform for real-time integration and activation. It isn't only a place to store data. It's the operating model for making that data useful across AI, analytics, CRM, and DXP.

Think of it as enterprise coordination

A database answers narrow questions. A data cloud coordinates enterprise data flow.

If a database is a well-organized room, a data cloud is the circulation system for the entire building. It pulls data in, stores it appropriately, processes it into something trustworthy, and then pushes it into the applications that need to act on it.

The core operating pattern has four parts:

- Ingestion: APIs, connectors, and pipelines pull data from CRM, ERP, e-commerce, CMS, analytics tools, service platforms, and external feeds.

- Storage: The platform holds or references data in forms suited to different workloads, including structured and unstructured content.

- Processing: Identity resolution, enrichment, transformation, and model-ready preparation happen here.

- Activation: The cleaned and governed data is used in Sitecore, BI tools, marketing automation, service workflows, and AI models.
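The four stages can be sketched as a tiny in-memory pipeline. This is an illustration under stated assumptions, not a platform design: `Record`, `DataCloudSketch`, and its methods are hypothetical names, an in-memory list stands in for storage, and lower-casing an email stands in for real identity processing.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Record:
    source: str          # hypothetical source name, e.g. "crm" or "commerce"
    customer_key: str    # identifier as it appears in the source system
    payload: dict


@dataclass
class DataCloudSketch:
    # Storage: an in-memory list stands in for the platform's storage layer.
    storage: list[Record] = field(default_factory=list)

    def ingest(self, records: list[Record]) -> None:
        """Ingestion: accept records pulled by connectors or pipelines."""
        self.storage.extend(records)

    def process(self) -> dict[str, dict]:
        """Processing: normalize keys and merge payloads into one profile per customer."""
        profiles: dict[str, dict] = {}
        for rec in self.storage:
            key = rec.customer_key.strip().lower()  # naive normalization, for illustration only
            profile = profiles.setdefault(key, {"sources": set()})
            profile["sources"].add(rec.source)
            profile.update(rec.payload)
        return profiles

    def activate(self, profiles: dict[str, dict], rule: Callable[[dict], bool]) -> list[str]:
        """Activation: return the customer keys a downstream tool should target."""
        return sorted(key for key, profile in profiles.items() if rule(profile))


cloud = DataCloudSketch()
cloud.ingest([
    Record("web", "Ana@Example.com", {"last_page": "/pricing"}),
    Record("commerce", "ana@example.com", {"orders": 2}),
])
profiles = cloud.process()
print(cloud.activate(profiles, lambda p: p.get("orders", 0) > 0))  # ['ana@example.com']
```

The web visit and the order merge into one profile only because processing normalizes the key; without that step, activation would see two partial customers.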

Why architecture matters more than the label

Modern platforms increasingly avoid copying everything into one monolithic store. One important pattern is Zero Copy, where data remains in its original environment while the platform accesses it in near real time. According to Google's overview of data cloud architecture, modern approaches like Zero Copy can reduce data transfer costs by up to 50%, and implementations focused on data mapping can produce a 30 to 40% ROI uplift in personalization for DXPs such as Sitecore and AEM by eliminating up to 60% of data silos. The same source describes the ingestion, storage, processing, and activation model directly in its explanation of what a data cloud is.

That matters for leaders who don't want another expensive duplication layer.

Practical rule: If your architecture creates one more copy of everything without improving identity, governance, or activation, it isn't solving the real problem.
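The Zero Copy idea can be illustrated as assembling a profile by reading each source in place at request time rather than replicating it into a central store. This is a hypothetical sketch: `SourceAdapter`, `InMemorySource`, and `ZeroCopyProfileView` stand in for whatever federated-query or connector mechanism a given platform provides, and no vendor API is implied.

```python
from typing import Protocol


class SourceAdapter(Protocol):
    """Hypothetical adapter: reads one customer's attributes from the system that owns them."""

    name: str

    def read(self, customer_id: str) -> dict: ...


class InMemorySource:
    """Stand-in for a CRM or commerce system; rows stay here and are read per request."""

    def __init__(self, name: str, rows: dict[str, dict]):
        self.name = name
        self._rows = rows
        self.reads = 0  # counts on-demand reads to show access happens at request time

    def read(self, customer_id: str) -> dict:
        self.reads += 1
        return dict(self._rows.get(customer_id, {}))


class ZeroCopyProfileView:
    """Builds a profile on request by querying each source in place."""

    def __init__(self, sources: list[SourceAdapter]):
        self.sources = sources

    def profile(self, customer_id: str) -> dict:
        merged: dict = {}
        for source in self.sources:
            for attr, value in source.read(customer_id).items():
                merged[f"{source.name}.{attr}"] = value  # namespace by owning system
        return merged


crm = InMemorySource("crm", {"c1": {"tier": "gold"}})
shop = InMemorySource("commerce", {"c1": {"open_basket": True}})
view = ZeroCopyProfileView([crm, shop])
print(view.profile("c1"))  # {'crm.tier': 'gold', 'commerce.open_basket': True}
```

The trade-off is that latency and source load move to read time, which is why designs of this kind often pair federation with caching or selective materialization for frequently used attributes.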

What good implementation actually looks like

In working DXP environments, the strongest data cloud designs share a few traits:

| Capability | What good looks like |
|---|---|
| Governance | Clear ownership, consent handling, access control, and lineage |
| Identity | Customer records reconciled across behavior, transaction, and CRM signals |
| Activation | Sitecore and other downstream tools can consume audiences and attributes quickly |
| Flexibility | Teams can add sources without redesigning the whole platform |

This is also where many projects fail. Teams buy a platform before they define identity rules, event models, or canonical business entities. They end up with a technically integrated stack and an operationally confusing one.

A more grounded path is to treat the platform as part of a broader data management platform strategy. The name matters less than whether the architecture supports real-time access, governance, and downstream action.

Data Cloud vs. Lake, Warehouse, and Mesh

Many teams use these terms interchangeably. That creates bad design decisions.

A warehouse, a lake, a mesh, and a data cloud can all exist in the same enterprise. They solve different problems. For Sitecore and broader DXP work, the confusion usually starts when a reporting platform is expected to behave like an activation platform.

What each model is best at

A data warehouse is optimized for structured, curated data and consistent reporting. Finance, revenue operations, and executive dashboards often rely on it because query performance and schema discipline matter.

A data lake is built for storing large volumes of raw data in native formats. It's useful when data science teams need flexibility or when the organization wants to retain logs, files, or semi-structured records before deciding how to model them.

A data mesh is less a platform than an operating model. It pushes ownership to domains, so marketing owns marketing data products, commerce owns commerce data products, and so on. This can work well in large organizations with strong engineering maturity, but it doesn't automatically create a unified customer view.

A data cloud focuses on unifying, governing, and activating data across environments. That's why it fits DXP work so well. The point isn't only storage. The point is operational access to trusted data for personalization, journey orchestration, AI, and analytics.

Data architecture comparison

| Attribute | Data Warehouse | Data Lake | Data Mesh | Data Cloud |
|---|---|---|---|---|
| Primary purpose | Structured reporting and BI | Raw data storage and exploration | Decentralized data ownership | Unified access and activation across systems |
| Typical data shape | Mostly structured | Structured, semi-structured, unstructured | Varies by domain | Structured, semi-structured, unstructured |
| Main users | Analysts, BI teams, finance | Data engineers, data scientists | Domain teams, platform teams | Marketing, IT, analytics, AI, DXP teams |
| Data ownership | Centralized | Centralized or platform-led | Federated by domain | Central governance with broad consumption |
| Strength | Reliable reporting | Flexibility at scale | Domain autonomy | Real-time integration plus activation |
| Weakness | Slower for operational activation | Can become a dumping ground | Governance complexity across domains | Requires strong identity and governance design |
| Fit for Sitecore personalization | Limited by itself | Indirect | Depends on execution maturity | Strong fit when connected to decisioning and content delivery |
| Common failure mode | Great reports, poor activation | Massive storage, little usability | Inconsistent standards | Too much integration, not enough business modeling |

What works in practice

Most enterprise stacks don't need an ideological choice. They need clear roles.

A common and sensible pattern looks like this:

- Warehouse for reporting: Revenue, pipeline, commercial performance, board-level BI.

- Lake for raw and large-scale inputs: Logs, files, transcripts, historical event stores.

- Mesh where the organization is mature enough: Domain-owned products with shared standards.

- Data cloud for activation: Customer profiles, audience building, real-time signals, AI-ready data services feeding Sitecore and adjacent systems.

The wrong question is which architecture wins. The right question is which architecture owns reporting, raw storage, domain stewardship, and experience activation.

Where DXP teams get tripped up

The website usually becomes the political center of the discussion because it's visible. But website personalization is a downstream effect, not the source of truth.

If the team points Sitecore directly at isolated operational systems, performance, consistency, and governance suffer. If they rely only on a warehouse, the profiles often arrive too late for current-context decisioning. If they embrace mesh without common identity and taxonomy, the customer view fragments again under a different name.

For DXP programs, the data cloud earns its place when it acts as the activation layer between enterprise data and customer experience systems. That's the practical distinction that matters.

Powering Sitecore AI and DXP with Unified Data

A Sitecore program usually stalls at the same point. The team has content, components, and campaign plans ready to go, but the DXP still cannot answer simple questions in real time. Is this visitor already a customer? Are they stuck in an open service issue? Did they abandon a high-value product last week? Are they part of an account that renews next month?

Without that context, personalization stays surface-level. Sitecore can react to page views and clicks, but it cannot make high-confidence decisions across the customer journey.

What unified data changes inside Sitecore

In a composable DXP, Sitecore should not become the place where customer truth is assembled from scratch. It should receive a usable profile, current event streams, and governed attributes from a data cloud that has already handled identity resolution, normalization, and policy controls.

That changes what the platform can do day to day.

A well-implemented data cloud gives Sitecore access to:

- Identity context: Anonymous and known activity tied together where consent and matching rules allow it.

- Commercial context: Orders, subscriptions, pricing tier, returns, renewals, and product interest.

- Service context: Cases, satisfaction signals, support history, and issue severity.

- Content and product context: Taxonomy, metadata, asset classifications, document-derived entities, and catalog attributes.

With those inputs, Sitecore CDP, Personalize, and Search stop working like disconnected features. They start operating from the same customer and content understanding.
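One way to picture what Sitecore receives is a single profile object carrying the four kinds of context listed above. This is a sketch only: the class and field names are hypothetical and do not reflect the Sitecore CDP data model.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class IdentityContext:
    profile_id: str
    anonymous_ids: list[str] = field(default_factory=list)  # cookies/devices linked under consent
    known_ids: list[str] = field(default_factory=list)      # e.g. hashed email, CRM contact id
    consent_marketing: bool = False


@dataclass
class CommercialContext:
    lifetime_orders: int = 0
    subscription_tier: Optional[str] = None
    renewal_due_days: Optional[int] = None


@dataclass
class ServiceContext:
    open_cases: int = 0
    highest_open_severity: Optional[str] = None
    last_csat: Optional[float] = None


@dataclass
class ContentContext:
    interests: list[str] = field(default_factory=list)  # taxonomy terms from content and catalog


@dataclass
class UnifiedProfile:
    identity: IdentityContext
    commercial: CommercialContext = field(default_factory=CommercialContext)
    service: ServiceContext = field(default_factory=ServiceContext)
    content: ContentContext = field(default_factory=ContentContext)

    def is_customer(self) -> bool:
        """A decision-time question the DXP can answer only with commercial context."""
        return self.commercial.lifetime_orders > 0 or self.commercial.subscription_tier is not None


p = UnifiedProfile(IdentityContext("p-001", known_ids=["crm:123"]), CommercialContext(lifetime_orders=3))
print(p.is_customer())  # True
```

Grouping attributes by context keeps ownership visible: commerce populates `commercial`, the service platform populates `service`, and Sitecore reads all of them from one object.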

Why AI performance rises or falls on data quality

AI features inside a DXP depend less on model novelty than on input quality. Bad identity stitching, stale records, missing consent flags, and weak taxonomy design produce poor recommendations and irrelevant content, no matter how polished the interface looks.

I see this in architecture reviews all the time. Teams want AI-generated variants, next-best-action logic, or smarter search, but the profile feeding those services still lives across CRM, commerce, support, and web analytics with different identifiers and conflicting definitions. The model is rarely the first problem.

For Sitecore leaders, the practical takeaway is simple. AI-driven personalization works when the profile is current, the attributes are meaningful, and the event stream reflects what happened recently enough to influence the next interaction. That is the operating role of the data cloud.

Better personalization starts with data that Sitecore can trust at decision time.
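"Recently enough" can be made explicit as a freshness check on each attribute before a decision uses it. The sketch assumes every attribute carries an `updated_at` timestamp; the attribute names and the per-attribute budgets in `MAX_AGE` are hypothetical, and the right thresholds depend on the use case.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness budgets: how old an attribute may be before decisioning ignores it.
MAX_AGE = {
    "cart_value": timedelta(minutes=30),
    "last_order_at": timedelta(hours=24),
    "segment": timedelta(days=1),
}


def usable_attributes(attributes: dict[str, tuple[object, datetime]], now: datetime) -> dict[str, object]:
    """Return only attributes fresh enough for a decision at `now`.

    `attributes` maps name -> (value, updated_at). Attributes without a budget are
    excluded, so nothing reaches decisioning without an explicit freshness rule.
    """
    fresh = {}
    for name, (value, updated_at) in attributes.items():
        budget = MAX_AGE.get(name)
        if budget is not None and now - updated_at <= budget:
            fresh[name] = value
    return fresh


now = datetime(2025, 1, 10, 12, 0, tzinfo=timezone.utc)
attrs = {
    "cart_value": (180.0, now - timedelta(minutes=5)),  # within its 30-minute budget
    "segment": ("prospect", now - timedelta(days=3)),   # older than its 1-day budget
}
print(usable_attributes(attrs, now))  # {'cart_value': 180.0}
```

Dropping the stale `segment` is exactly the "buys yesterday, still treated as a prospect" failure described earlier, caught before it reaches the page.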

A practical Sitecore activation pattern

The architecture that works in production is usually straightforward, but it requires discipline across both marketing and IT.

1. Collect high-value signals from source systems: Bring in web behavior, form activity, commerce events, CRM changes, campaign responses, service interactions, and selected account or contract data.

2. Resolve identity and standardize meaning: Match records across systems, define shared attributes, and normalize events so "customer," "lead," "subscriber," and "account" do not mean different things in every application.

3. Publish profiles and audiences to Sitecore: Feed Sitecore Personalize, CDP, Search, and adjacent services with stable traits and event streams instead of one-off exports.

4. Activate decisions in channel: Use that profile for homepage variants, offer suppression, cross-sell logic, post-login experiences, search ranking, and journey orchestration.

5. Send outcomes back for improvement: Capture response data, conversions, drop-off points, and engagement changes so the next decision is based on actual performance.

That is how broad guidance on AI-enhanced personalization in DXPs becomes executable inside a Sitecore estate. The data cloud handles profile assembly and governance. Sitecore uses that context to decide what to show, to whom, and at what moment.
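The "resolve identity and standardize meaning" step is often modeled as graph connectivity: any records that share a trusted identifier end up in the same profile. The sketch below uses a union-find (disjoint-set) structure for that. It assumes deterministic matching on exact, prefixed identifiers only (such as `email:` or `cookie:`); the record ids are hypothetical, and probabilistic matching and consent checks are out of scope.

```python
from collections import defaultdict


class UnionFind:
    """Disjoint-set structure over string keys (record ids and identifiers)."""

    def __init__(self) -> None:
        self.parent: dict[str, str] = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving keeps trees shallow
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a


def resolve_profiles(records: dict[str, list[str]]) -> list[list[str]]:
    """Group source record ids that share any identifier.

    `records` maps a record id (e.g. "crm:42") to the identifiers it carries
    (e.g. ["email:ana@example.com"]). Prefixes keep record ids and identifiers
    from colliding. Returns one sorted list of record ids per resolved profile.
    """
    uf = UnionFind()
    for record_id, identifiers in records.items():
        uf.find(record_id)  # register records that carry no identifiers
        for ident in identifiers:
            uf.union(record_id, ident)
    groups: dict[str, list[str]] = defaultdict(list)
    for record_id in records:
        groups[uf.find(record_id)].append(record_id)
    return sorted(sorted(group) for group in groups.values())


records = {
    "web:visit-1": ["cookie:abc"],                            # anonymous visit
    "web:form-7": ["cookie:abc", "email:ana@example.com"],    # form fill links cookie to email
    "crm:42": ["email:ana@example.com"],
    "shop:order-9": ["email:ben@example.com"],
}
print(resolve_profiles(records))
# [['crm:42', 'web:form-7', 'web:visit-1'], ['shop:order-9']]
```

The form fill is what turns the anonymous visit into part of a known profile, which mirrors the anonymous-to-known transition the activation design has to handle.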

SharePoint benefits from the same foundation

The same pattern improves SharePoint in large intranets and knowledge-heavy environments.

If employee profiles, department structures, permissions, document metadata, learning history, and search behavior all sit in separate systems, the intranet becomes a content repository with weak discovery. Unified data improves relevance because search and content targeting can reflect role, region, business unit, current tasks, and previous activity.

That has practical value in onboarding, policy communication, self-service support, and knowledge reuse. The delivery channel changes. The requirement for clean, connected context does not.


What fails in real implementations

A few patterns create avoidable problems:

- Sending every source system directly into Sitecore: Integration count grows fast, ownership gets muddy, and change management becomes expensive.

- Using clickstream data as the full customer profile: Behavioral data is useful, but it lacks commercial, service, and account context.

- Deferring consent and governance decisions: Audience activation slows down once legal, security, or regional compliance issues surface late.

- Leaving unstructured content unmanaged: Search, recommendations, and AI prompts degrade quickly when metadata and extraction rules are weak.

The best Sitecore implementations keep responsibilities clear. The data cloud prepares and governs the profile. Sitecore turns that profile into relevant experiences.

Your Roadmap for a Successful Data Cloud Migration

A data cloud migration shouldn't begin with platform demos. It should begin with a narrow business outcome and a clear operating model.

If the target is vague, the implementation drifts into plumbing. Teams integrate systems, move records, and still can't answer whether the experience got better.

Start with the use case, not the stack

A good first workshop includes both marketing and IT. The point is to agree on what the business wants to activate first.

Useful examples include:

- Improve journey relevance: Better segmentation and content targeting inside Sitecore.

- Reduce profile fragmentation: One customer view across web, CRM, service, and commerce.

- Support AI safely: Better prompts, recommendations, and automation from governed data.

- Modernize intranet discovery: More relevant SharePoint search and content delivery.

From there, define what data is required to make the use case real. Many teams skip this and jump straight into connector counts.

The migration path that usually works

Discovery and audit

Inventory the systems, but don't stop at application names. Inspect entities, identifiers, data quality, consent status, event definitions, latency expectations, and ownership.

Questions worth answering early:

- Which systems define customer identity?

- Where does consent live?

- Which events are required for personalization?

- What unstructured sources need extraction or enrichment?

- Which fields are authoritative when systems disagree?
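The last question, which fields are authoritative, is usually answered with survivorship rules. A minimal sketch, assuming a hypothetical per-field ranking of source systems in `FIELD_PRECEDENCE`, with the most recently updated value as the tie-breaker; the source names and rankings are illustrative, not a recommendation.

```python
from datetime import date

# Hypothetical precedence: earlier in the list wins for that field.
FIELD_PRECEDENCE = {
    "email": ["crm", "commerce", "web"],
    "billing_country": ["erp", "commerce", "crm"],
    "phone": ["service", "crm"],
}


def golden_value(field_name: str, candidates: list[dict]):
    """Pick the surviving value for one field.

    Each candidate is {"source": str, "value": ..., "updated": date}. Sources not
    listed for the field rank last; ties on rank go to the most recent update.
    """
    ranking = FIELD_PRECEDENCE.get(field_name, [])

    def rank(c: dict) -> int:
        return ranking.index(c["source"]) if c["source"] in ranking else len(ranking)

    usable = [c for c in candidates if c["value"] not in (None, "")]
    if not usable:
        return None
    best = min(usable, key=lambda c: (rank(c), -c["updated"].toordinal()))
    return best["value"]


candidates = [
    {"source": "web", "value": "ana@new.example", "updated": date(2025, 3, 1)},
    {"source": "crm", "value": "ana@example.com", "updated": date(2024, 11, 2)},
]
print(golden_value("email", candidates))  # ana@example.com
```

Writing the rules down this way forces the business conversation the audit is meant to surface: someone has to decide, field by field, which system wins.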

Data model and governance

Here, programs either become durable or become expensive.

Define a canonical model for core entities such as customer, account, product, order, subscription, case, content asset, and consent. Then set rules for stewardship, retention, access, and lineage.

Governance isn't a compliance afterthought. It's what lets marketing move faster without breaking trust.
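Governance rules are easiest to enforce when they are checked in code at the point where data leaves the platform. The sketch below assumes hypothetical attribute classifications (`ATTRIBUTE_PURPOSE`), consent purposes, and a retention window (`RETENTION`); it shows the shape of a policy gate, not a compliance framework.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical classification: the processing purpose each attribute needs consent for.
ATTRIBUTE_PURPOSE = {
    "segment": "personalization",
    "cart_value": "personalization",
    "email": "marketing",
    "open_cases": "service",
}
RETENTION = timedelta(days=365)  # hypothetical retention window


@dataclass
class ConsentRecord:
    purposes: set[str]


def releasable(attributes: dict[str, object], consent: ConsentRecord,
               collected_on: date, today: date) -> dict[str, object]:
    """Return only the attributes a downstream tool may receive."""
    if today - collected_on > RETENTION:
        return {}  # past retention: release nothing
    return {
        name: value
        for name, value in attributes.items()
        # Unclassified attributes map to None and are never released.
        if ATTRIBUTE_PURPOSE.get(name) in consent.purposes
    }


consent = ConsentRecord(purposes={"personalization"})
profile_attrs = {"segment": "renewal-due", "email": "ana@example.com", "shoe_size": 38}
print(releasable(profile_attrs, consent, date(2025, 1, 1), date(2025, 6, 1)))
# {'segment': 'renewal-due'}
```

Defaulting unclassified attributes to "not released" is the design choice that keeps new sources from leaking into activation before someone has classified them.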

Integration and activation design

Choose how the data cloud will ingest, reference, process, and expose data. Some organizations prefer a tightly coupled vendor ecosystem. Others need a more flexible multi-cloud pattern because their stack is already diverse.

For Sitecore programs, the activation design needs to answer practical questions:

| Decision area | What to decide |
|---|---|
| Identity | Matching logic, profile persistence, anonymous-to-known transitions |
| Event model | Standard names, attributes, timestamps, business meaning |
| Activation path | How audiences and attributes reach Sitecore and adjacent tools |
| Latency | What must happen in near real time versus batch |
| Security | Access policies, encryption, auditability, regional controls |
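The event model and latency rows can be made concrete with a shared event envelope that every source maps into, plus a rule that decides which events take the near-real-time path. Event names, required attributes, and the routing set below are hypothetical examples.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical canonical event names and the attributes each must carry.
REQUIRED_ATTRIBUTES = {
    "order_placed": {"order_id", "value", "currency"},
    "case_opened": {"case_id", "severity"},
    "page_viewed": {"url"},
}
# Events that should influence the next interaction take the streaming path.
REAL_TIME_EVENTS = {"order_placed", "case_opened"}


@dataclass
class Event:
    name: str
    profile_id: str
    occurred_at: datetime  # when it happened in the source, not when it was ingested
    attributes: dict


def validate(event: Event) -> list[str]:
    """Return a list of problems; an empty list means the event matches the shared model."""
    required = REQUIRED_ATTRIBUTES.get(event.name)
    if required is None:
        return [f"unknown event name: {event.name}"]
    problems = []
    missing = required - set(event.attributes)
    if missing:
        problems.append(f"missing attributes: {sorted(missing)}")
    if event.occurred_at.tzinfo is None:
        problems.append("timestamp must be timezone-aware")
    return problems


def route(event: Event) -> str:
    return "stream" if event.name in REAL_TIME_EVENTS else "batch"


evt = Event("order_placed", "p-001", datetime(2025, 1, 10, 9, 30, tzinfo=timezone.utc),
            {"order_id": "o-9", "value": 120.0})
print(validate(evt))  # ["missing attributes: ['currency']"]
print(route(evt))     # stream
```

Requiring timezone-aware timestamps matters once events from several regions feed the same freshness and ordering logic.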

If the broader modernization effort includes infrastructure and operating model shifts, a more complete enterprise cloud migration strategy helps frame the data work in business terms rather than as a standalone integration project.

Vendor selection questions that matter

Most checklists are too generic. For DXP leaders, these are the practical questions:

- Can it unify structured and unstructured data without turning implementation into a custom engineering project?

- Does it support real-time or near-real-time access where the use case demands it?

- How does identity resolution work across anonymous and known profiles?

- Can it govern consent, access, and lineage cleanly?

- How easily can Sitecore, SharePoint, CRM, analytics, and service platforms consume the outputs?

- What does the operating model look like after launch?

What causes projects to stall

The biggest delays usually come from non-technical issues.

Business teams don't agree on what a customer record is. Regions use different taxonomies. Legal reviews happen after integration begins. Content teams aren't involved in metadata design. Support teams are left out even though service signals matter to experience.

The fix is straightforward. Make the migration a shared operating model initiative. The technology is necessary, but it isn't the hard part.

From Cost Center to Revenue Driver: Data Cloud Use Cases

A data cloud earns budget approval when it changes commercial outcomes, not when it adds another place to store records. In DXP programs, the fastest proof usually comes from fixing decisions that were already underperforming because customer context was split across systems.

That matters in Sitecore more than teams often expect. Personalization rules, AI-assisted recommendations, journey orchestration, and experimentation all improve when the platform can work from a current profile instead of partial channel data.

Three use cases that create visible value

1. Smarter audience activation in Sitecore

This is usually the first place leaders see a return.

When campaign data, CRM history, product signals, and on-site behavior are available in one operational layer, marketers can stop building static audiences in spreadsheets and start activating based on current conditions. Existing customers can be excluded from acquisition spend. High-intent accounts can be routed into different journeys. Content can change based on service history, product ownership, region, or buying stage instead of just page visits.

For Sitecore teams, that changes the role of personalization. It stops being a thin rules engine on top of web analytics and becomes a decisioning layer fed by real business context. That is also the groundwork AI needs. Models produce better recommendations and next-best actions when the underlying profile includes both behavioral and transactional signals.
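The suppression and routing examples in this use case reduce to rules evaluated against the unified profile. A minimal sketch, assuming hypothetical profile fields (including a 0-100 `intent_score`) and audience names; in practice these rules would live in the decisioning tool and read attributes published by the data cloud.

```python
from dataclasses import dataclass


@dataclass
class Profile:
    profile_id: str
    is_customer: bool
    intent_score: int     # hypothetical 0-100 score from recent behavior
    region: str
    buying_stage: str     # e.g. "research", "evaluation", "purchase"


def acquisition_audience(profiles: list[Profile]) -> list[str]:
    """Prospects only: existing customers are suppressed from acquisition spend."""
    return [p.profile_id for p in profiles if not p.is_customer]


def high_intent_audience(profiles: list[Profile], threshold: int = 70) -> list[str]:
    """Prospects showing strong current intent are routed to a separate journey."""
    return [p.profile_id for p in profiles if not p.is_customer and p.intent_score >= threshold]


def content_variant(p: Profile) -> str:
    """Choose a content block from business context, not just the page being viewed."""
    if p.is_customer:
        return f"customer-{p.region}"
    return f"prospect-{p.buying_stage}"


profiles = [
    Profile("a", True, 90, "emea", "purchase"),
    Profile("b", False, 85, "na", "evaluation"),
    Profile("c", False, 20, "na", "research"),
]
print(acquisition_audience(profiles))  # ['b', 'c']
print(high_intent_audience(profiles))  # ['b']
print(content_variant(profiles[0]))    # customer-emea
```

Profile "a" shows the highest intent but is never targeted for acquisition, because the commercial context says it is already a customer.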

2. Revenue signals from service interactions

Support data is often left out of experience architecture. That is a mistake.

Ticket volume, recurring issue types, escalation paths, CSAT trends, and time-to-resolution often tell you more about expansion risk or renewal timing than campaign engagement does. A customer who opens three tickets after onboarding should not receive the same upsell journey as a healthy account. A product line with repeated support friction should influence what content gets promoted, what offer gets delayed, and which accounts get routed to human follow-up.

Teams that want a practical view of this should look at approaches for deriving revenue intelligence from support data, because support platforms often hold the clearest post-sale signal for churn prevention and growth planning.
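The ticket example above can be written as a simple gating rule that runs before any upsell logic. The thresholds (three tickets since onboarding, average CSAT below 3.0 on an assumed 1-5 scale) and the journey names are hypothetical; the point is that service data changes the outcome of a commercial decision.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ServiceSignals:
    tickets_since_onboarding: int
    open_escalations: int
    avg_csat: Optional[float]  # assumed 1-5 scale; None if no surveys answered


def next_journey(signals: ServiceSignals, upsell_eligible: bool) -> str:
    """Route an account using service health before any upsell logic runs."""
    if signals.open_escalations > 0:
        return "human-follow-up"  # active friction: route to a person, hold offers
    struggling = signals.tickets_since_onboarding >= 3 or (
        signals.avg_csat is not None and signals.avg_csat < 3.0
    )
    if struggling:
        return "adoption-support"  # implementation content instead of an upsell
    return "upsell" if upsell_eligible else "nurture"


print(next_journey(ServiceSignals(3, 0, 4.2), upsell_eligible=True))   # adoption-support
print(next_journey(ServiceSignals(0, 0, None), upsell_eligible=True))  # upsell
```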

3. Better recommendations and next-step guidance

Recommendations improve when they use more than clickstream data.

In commerce, that means combining browsing behavior with order history, stock position, product compatibility, contract status, and service sentiment. In B2B portals, it can mean showing implementation content to a new customer, renewal guidance to an at-risk account, or cross-sell options only when support and adoption signals are healthy.

The practical gain is relevance. The strategic gain is trust. Users stop seeing repetitive suggestions that ignore what the business already knows about them.
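Combining those signals usually means filtering candidates on hard business constraints (stock, compatibility, ownership, account health) and only then ranking what remains on behavior. A minimal sketch with hypothetical field names; `browse_affinity` stands in for whatever behavioral score a recommendation service produces.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    sku: str
    in_stock: bool
    compatible_with: set[str] = field(default_factory=set)  # base products this item requires
    browse_affinity: float = 0.0                             # hypothetical 0-1 clickstream score


def recommend(candidates: list[Candidate], owned_skus: set[str],
              account_healthy: bool, limit: int = 3) -> list[str]:
    """Filter on business constraints first, then rank by behavioral affinity."""
    if not account_healthy:
        return []  # hold cross-sell while support or adoption signals are poor
    eligible = [
        c for c in candidates
        if c.in_stock
        and c.sku not in owned_skus  # order history: don't recommend what they already own
        # Accessories with a compatibility list need an owned base product.
        and (not c.compatible_with or c.compatible_with & owned_skus)
    ]
    eligible.sort(key=lambda c: c.browse_affinity, reverse=True)
    return [c.sku for c in eligible[:limit]]


catalog = [
    Candidate("dock-x", True, {"laptop-x"}, 0.9),
    Candidate("dock-y", True, {"laptop-y"}, 0.95),
    Candidate("bag", True, set(), 0.4),
    Candidate("mouse", False, set(), 0.8),
]
print(recommend(catalog, owned_skus={"laptop-x"}, account_healthy=True))  # ['dock-x', 'bag']
```

Clickstream alone would have ranked `dock-y` first; order history and compatibility data remove it because the customer owns the other laptop.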

Why finance teams should care

The financial drag of fragmented data rarely appears as one large line item. It shows up in rework, slower execution, and preventable waste across teams.

- Duplicate integration work: Teams recreate the same joins, mappings, and business logic in different tools.

- Slow campaign cycles: Analysts and operations teams prepare segments manually before launches.

- Wasted media and messaging: Outdated profiles put the wrong people into paid and owned journeys.

- Weak AI outputs: Models and copilots generate lower-quality recommendations because the context is incomplete or stale.

Once the data cloud is operating properly, the investment starts to look less like platform overhead and more like decision infrastructure.

Revenue impact often appears first as fewer bad decisions, fewer missed signals, and less operational waste.

Where the value shows up first

Early wins are usually straightforward. Better suppression logic. Cleaner lead routing. More relevant content blocks in Sitecore. Improved search and recommendation quality. Faster testing cycles because audience and profile data no longer need manual preparation.

These are not headline-grabbing AI projects. They are the work that makes AI-driven personalization credible in a composable DXP. Without that foundation, Sitecore can publish content and trigger journeys, but it cannot reliably decide what should happen next for a specific customer.

Start Your Data-Driven DXP Journey with Kogifi

In the context of modern digital experience, "what is a data cloud?" isn't a theoretical question. It's a design decision.

If the business wants Sitecore to deliver meaningful personalization, AI-assisted decisioning, and connected omnichannel journeys, it needs a governed data foundation that can unify signals across systems. If the business wants SharePoint to behave like a useful knowledge environment instead of a document archive, it needs the same thing.

The real takeaway

A data cloud isn't just another data store. It's the layer that lets experience platforms work with complete context.

That matters because the gap between a technically functional DXP and a commercially effective DXP is usually the data layer underneath it. The CMS can publish. The journey tool can automate. The AI service can generate. None of them can compensate for fragmented identity, weak governance, or stale activation paths.

Why implementation depth matters

This kind of work sits at the intersection of architecture, content operations, AI readiness, governance, and platform execution. That's why Sitecore expertise matters so much here.

A team needs to understand not just data pipelines, but also how profile attributes affect personalization logic, how taxonomy influences content relevance, how event design impacts experimentation, and how composable services behave under enterprise traffic and governance demands.

The same is true for SharePoint solutions. Personalization, knowledge discovery, multilingual content, and role-based access all depend on a clean and well-managed data model.

What strong delivery looks like

For enterprise organizations, especially global brands and teams operating across multiple regions, strong delivery usually means:

- Platform fluency: Deep understanding of Sitecore AI, composable DXP patterns, and SharePoint architecture.

- Practical governance: Security, consent, access, and content operations designed into the solution.

- Operational support: The platform doesn't stop needing care after launch.

- Business alignment: Architecture decisions tied to personalization, engagement, and ROI goals.

Kogifi brings that mix. With over 10 years of experience and more than 50 clients worldwide, the company supports enterprise DXP and CMS programs across Sitecore AI, AEM, and SharePoint, along with updates, audits, implementations, and 24/7 support.

If you're defining a data strategy around Sitecore, AI personalization, SharePoint, or a broader composable DXP program, the most important move is to stop treating data as an integration side project. It is the foundation.

If you're ready to turn fragmented customer and content data into a stronger Sitecore or SharePoint experience, talk to Kogifi about building the data foundation your DXP needs.