A/B/n testing is an expansion of standard A/B testing. Instead of comparing just two versions of a webpage (A and B), A/B/n testing lets you test three or more distinct variations (A, B, C, ...n) against each other simultaneously. This approach accelerates the optimization cycle, helping you discover high-impact improvements and validate strategic hypotheses much faster, especially within sophisticated platforms like Sitecore.

Moving Beyond Simple A/B Tests

Relying on standard A/B testing can sometimes feel like trying to navigate a complex digital ecosystem with a map that only shows two roads. While it’s a solid starting point for optimization, it often falls short of capturing the full picture, especially for enterprise platforms built on Sitecore. This is where A/B/n testing comes in, serving as a powerful upgrade for your optimization strategy.

It’s not just about adding more options to the mix. It's about unlocking entirely new pathways to your conversion goals. A/B/n testing, especially when powered by Sitecore AI, allows you to test several completely different experiences at the same time, helping you find breakthrough improvements instead of just settling for minor tweaks.

Why A/B/n Testing Is a Strategic Imperative

Data-driven optimization is no longer a "nice-to-have"—it's a core business function. For businesses running on sophisticated Digital Experience Platforms (DXPs) like Sitecore, A/B/n testing is a native capability that delivers immense value. It empowers marketing and product teams to validate bold ideas quickly and make faster, more confident decisions based on real user behavior, directly impacting the bottom line.

Instead of debating which of three new homepage concepts is best, you can test all three in a real-world environment using Sitecore's integrated tools. This data-first approach removes internal biases and lets customer behavior dictate the winning strategy.

The Advantage for Enterprise Platforms

A/B/n testing truly shines within integrated platforms like Sitecore AI or even highly customized SharePoint solutions. These systems often handle huge amounts of content and complex user journeys, where small, isolated A/B tests can struggle to produce meaningful results.

In contrast, A/B/n testing gives marketers the power to:

- Test radically different user experiences: Compare a promotions-focused page against a content-rich educational layout and a minimalist design all at once.

- Accelerate learning cycles: Gather insights from multiple hypotheses within a single experiment, saving valuable time and resources.

- Identify breakthrough performance: Move beyond incremental gains and uncover strategies that deliver significant performance lifts.

Before diving deeper, it's a good idea to make sure you're comfortable with the fundamentals of A/B testing. This powerful method works hand-in-hand with other optimization techniques, and you can explore the broader landscape in our guide to website personalization tools.

Choosing Your Experimentation Method

Picking the right experimentation method is the difference between getting clear, actionable results and getting noise. The choice between A/B, A/B/n, and multivariate testing really boils down to what you want to learn and how much traffic you have to work with. Let's make this real with a common business scenario.

Imagine you want to improve an e-commerce product page. A standard A/B test is the most straightforward approach. You could test a red "Add to Cart" button against a green one. It’s a simple, focused experiment designed to answer one question: which color converts better? The upside is that it requires the least amount of traffic to get a reliable answer.

But let’s be honest, a simple button color change is rarely going to drive a huge business uplift. This is where A/B/n testing comes in, giving you a much more strategic edge. Instead of just tweaking one small element, you could test three radically different page layouts:

- Variation A: Your current page design (the control).

- Variation B: A new layout that’s all about big, beautiful product imagery and customer photos.

- Variation C: Another new layout that prioritizes deep technical specs, comparison charts, and expert reviews.

This A/B/n test isn't just asking which button is better; it's asking which overall strategy connects with your audience. Do they respond to visual storytelling or to hard data? The answer gives you a much deeper insight that can shape your entire site design, not just one page.

A/B/n vs. Multivariate Testing

Now, what about multivariate testing (MVT)? Using that same product page, an MVT approach would test multiple element combinations all at once. For example, you might test two different headlines, three different hero images, and two different calls-to-action. This instantly creates 2 x 3 x 2 = 12 different combinations that are tested against each other.

Multivariate testing is incredibly powerful for figuring out how different elements interact with one another. But it comes with a huge price tag: traffic. Your audience is split across so many combinations that each one gets only a tiny slice of visitors. This makes reaching statistically significant results a slow and often impractical process.
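To make the traffic cost concrete, here is a minimal Python sketch that counts the combinations from the example above and compares the visitors each arm would receive under an even split. The element names and the monthly traffic figure are assumptions chosen for illustration, not numbers from a real site.

```python
from itertools import product

# Hypothetical element options, matching the counts in the example above.
headlines = ["headline_1", "headline_2"]
hero_images = ["hero_1", "hero_2", "hero_3"]
ctas = ["cta_1", "cta_2"]

# A full-factorial multivariate test serves every combination of options.
mvt_combinations = list(product(headlines, hero_images, ctas))


def visitors_per_arm(monthly_visitors: int, arms: int) -> int:
    """Visitors each arm receives when traffic is split evenly."""
    return monthly_visitors // arms


monthly_visitors = 60_000  # assumed traffic figure, for illustration only

print(len(mvt_combinations))                                       # 12
print(visitors_per_arm(monthly_visitors, 3))                       # 20000 per arm in a 3-way A/B/n test
print(visitors_per_arm(monthly_visitors, len(mvt_combinations)))   # 5000 per MVT combination
```

With the same traffic, each MVT combination sees a quarter of the visitors that each arm of a three-way A/B/n test would, which is why the MVT version takes so much longer to reach a reliable answer.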

For that reason, MVT is rarely the right choice for enterprise use cases unless a site has massive traffic volumes. A/B/n testing, on the other hand, strikes a practical balance. It lets you run bold, strategic tests without the heavy traffic requirements of MVT, making it the go-to choice for most enterprise teams using a DXP like Sitecore.

The Sweet Spot for Enterprise DXPs

For organizations managing their digital experiences on platforms like Sitecore, A/B/n testing hits a strategic sweet spot. It delivers rich, directional insights that can steer major design and content decisions, all without the paralyzing complexity of a full multivariate experiment. It's simply the most actionable way to drive meaningful, substantial change.

While more complex methods exist, the data shows that practicality usually wins. Standard A/B tests still account for 67.6% of all experiments, showing a clear preference for direct, unambiguous comparisons. This really underlines the power of straightforward approaches like A/B testing and its logical extension, A/B/n testing, which deliver results you can actually use. You can review the full statistics about experimentation method popularity on Convert.com.

By testing distinct, complete concepts against each other, Sitecore and SharePoint users can validate entire strategies. This accelerates learning and ensures that development and design resources are invested in ideas already proven to resonate with customers.

This is a far more efficient path to innovation than getting stuck in a cycle of endlessly tweaking minor elements. For those looking to push the boundaries even further, you can explore how these tests relate to more advanced automated methods. If you're interested, you can learn more about how multi-armed bandit testing compares to traditional A/B tests in our related guide.

How to Design a High-Impact A/B/n Experiment

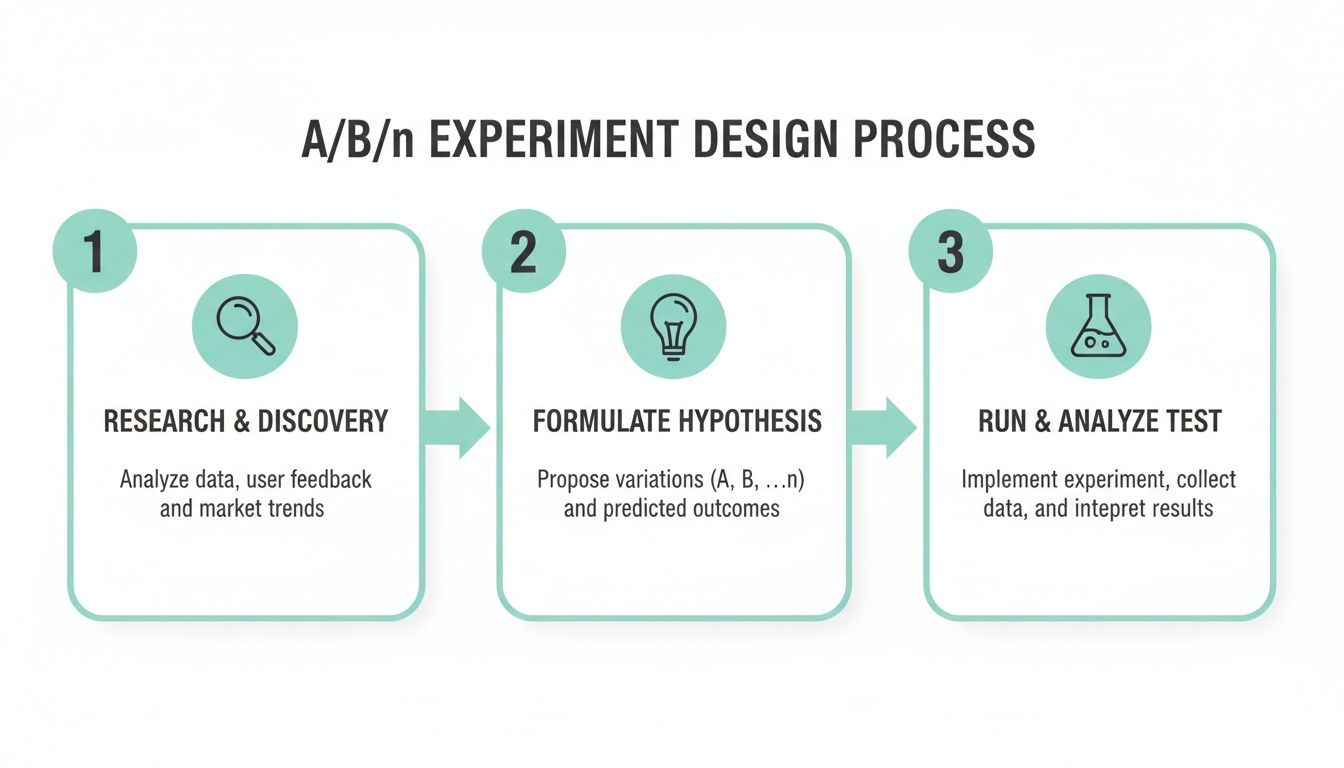

A great experiment doesn't start when you open Sitecore’s Experience Editor or set up a test in a SharePoint environment. It begins much earlier. The entire foundation of a powerful A/B/n testing campaign rests on a solid, data-backed hypothesis. Just guessing what might work is a recipe for wasted time and muddy results.

Instead, your process should kick off with a deep dive into your existing user data. Get familiar with your analytics, pore over heatmaps, and watch session recordings to find where real users are hitting roadblocks. Where are visitors getting stuck? Which pages have shockingly high exit rates? This detective work is what moves you from assumptions to evidence-based optimization.

Formulating a Data-Driven Hypothesis

Once you’ve pinpointed a problem, you can build a clear, testable hypothesis. A weak hypothesis is vague—something like, "We want to improve the homepage." A strong one is specific, measurable, and directly connected to a business goal.

For instance, let's say you noticed a high drop-off rate on your mobile checkout page. Your hypothesis might look like this:

"We believe that replacing our multi-step mobile checkout process with a single-page accordion layout will reduce cart abandonment by 15% for mobile users because it simplifies the user journey and reduces perceived effort."

This hypothesis clearly lays out the proposed change, the expected outcome, and the "why" behind it. It gives you a perfect roadmap for designing your variations and, later, analyzing the results.

Defining Metrics and Calculating Sample Size

With your hypothesis locked in, it's time to define your key performance indicators (KPIs). Every experiment needs one primary success metric that directly measures whether you hit your goal. In our checkout example, the primary metric would be the cart completion rate.

You should also track secondary metrics to catch any unintended side effects and get a fuller picture. These could include:

- Average Order Value (AOV): Did the simpler checkout encourage people to spend more or less?

- Time to Completion: How much faster did users get through the new checkout design?

- Engagement with Upsells: Did the new layout change how users interacted with product recommendations?

Before you launch anything in Sitecore or another DXP, you absolutely must calculate the required sample size. This tells you how many visitors need to see each variation to get statistically significant results—results you can actually trust, not just random noise. Plenty of online calculators can help with this, ensuring your test is set up to deliver reliable insights.
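If you'd like to see what those calculators are doing under the hood, the sketch below uses a standard two-proportion sample size formula. It is one common approach, not the method of any particular tool or of Sitecore. The function name, the default 5% significance level and 80% power, and the Bonferroni adjustment for comparing each variation against the control are all assumptions made for this illustration.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variation(
    baseline_rate: float,
    relative_lift: float,
    variations: int = 3,
    alpha: float = 0.05,
    power: float = 0.80,
) -> int:
    """Approximate visitors needed in EACH variation (control included).

    Uses the normal-approximation formula for comparing two proportions.
    alpha is divided by the number of variation-vs-control comparisons
    (a Bonferroni adjustment, assumed here as a simple safeguard).
    """
    if variations < 2:
        raise ValueError("An experiment needs a control and at least one variation.")
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    comparisons = variations - 1
    adjusted_alpha = alpha / comparisons

    z_alpha = NormalDist().inv_cdf(1 - adjusted_alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    pooled = (p1 + p2) / 2

    numerator = (
        z_alpha * sqrt(2 * pooled * (1 - pooled))
        + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


# Hypothetical inputs: a 5% baseline conversion rate and a hoped-for 20% relative lift.
print(sample_size_per_variation(0.05, 0.20, variations=2))  # plain A/B test
print(sample_size_per_variation(0.05, 0.20, variations=3))  # A/B/n with two challengers
```

Adding a third variation raises the requirement per variation as well as the number of variations, so the total traffic needed grows faster than you might expect.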

Pre-Flight Checklist for A/B/n Testing

To make sure your tests are built on solid ground, always use a pre-flight checklist. This forces you to cover all the critical design stages before a single line of code is written. A well-designed experiment is one that’s planned with rigor from the start, which is a key part of any good website conversion rate optimization strategy. It sets the stage for actionable results that create real business value.

Ready to move from a solid hypothesis to a live experiment? This is where an enterprise DXP like Sitecore really shows its strength. Running a sophisticated A/B/n testing campaign isn’t some clunky add-on; it's a core feature baked right into the platform. It’s designed to give marketing teams direct control over the entire optimization process.

The whole journey kicks off inside the Sitecore Experience Platform, specifically within the Experience Editor. This visual, on-page editing tool lets you create multiple versions of a page without roping in a developer for every little tweak. This is how you bring your test variations to life, whether you're trying out different hero banners, calls-to-action, or completely new page layouts.

Before you jump into the technical setup, remember that every successful experiment starts with a structured design process.

This process highlights a crucial point: a great test isn't just about the tool. It's built on a foundation of solid research and a clear, testable hypothesis.

Setting Up Your A/B/n Test in Sitecore

Once your page variations are ready in the Experience Editor, it’s time to configure the test itself. This is where Sitecore’s integrated analytics and personalization engine truly shines. You get to define what success looks like by assigning specific goals to your experiment.

These goals go way beyond simple clicks. You can track meaningful business outcomes that actually matter, such as:

- Downloads of a key whitepaper

- Form submissions for a product demo

- Adds to cart on an e-commerce page

- Revenue generated per visit

Tying tests directly to business KPIs is how you prove ROI. After setting your goals, you decide how to split your traffic. You can manually assign a percentage of visitors to each variation, making sure each one gets enough eyeballs to produce statistically significant results.

With your variations, goals, and traffic split all configured, launching the test is just a matter of a few clicks. Sitecore takes it from there, automatically serving the different page versions to visitors and collecting performance data in the background.
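Sitecore handles the traffic split for you, and this guide doesn't cover how it does so internally. Still, it helps to understand the general idea. The Python sketch below shows one common technique, deterministic hash-based bucketing, in which the same visitor always lands in the same variation. The function name, the visitor and experiment identifiers, and the weights are hypothetical.

```python
import hashlib


def assign_variation(visitor_id: str, experiment_id: str, weights: dict[str, float]) -> str:
    """Deterministically map a visitor to a variation according to traffic weights."""
    if not weights:
        raise ValueError("At least one variation is required.")
    total = sum(weights.values())

    # Hash the experiment and visitor together so that each experiment
    # shuffles visitors independently of every other experiment.
    digest = hashlib.sha256(f"{experiment_id}:{visitor_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # a number in [0, 1)

    cumulative = 0.0
    for name, weight in weights.items():
        cumulative += weight / total
        if bucket < cumulative:
            return name
    return list(weights)[-1]  # guards against floating-point rounding at the top edge


# Hypothetical split: half of the traffic stays on the control.
split = {"A": 0.5, "B": 0.25, "C": 0.25}
print(assign_variation("visitor-42", "homepage-layout-test", split))
```

Because the assignment depends only on the identifiers, a returning visitor keeps seeing the same experience without any stored state, which keeps the comparison fair.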

Leveraging Sitecore AI for Automated Optimization

While a standard A/B/n test in Sitecore is already powerful, adding Sitecore AI takes your optimization game to a whole new level. Instead of manually splitting traffic and waiting for a winner, you can switch on Sitecore's Auto-Personalization feature. This lets machine learning algorithms do the heavy lifting by automatically spotting high-value audience segments and showing them the best-performing experience.

Sitecore AI can dynamically shift traffic toward the winning variation in real-time. This minimizes potential lost conversions during the testing period and accelerates the journey to an optimized experience, effectively combining testing and personalization into a single, automated workflow.
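This guide doesn't describe the algorithm Sitecore AI uses, so the sketch below is not a picture of the platform's internals. It illustrates the general idea of shifting traffic toward a winner with Thompson sampling, a well-known multi-armed bandit technique. The class name, the simulated conversion rates, and the visitor count are assumptions for illustration only.

```python
import random


class ThompsonSampler:
    """Minimal Beta-Bernoulli Thompson sampling over a set of variations."""

    def __init__(self, variations, seed=None):
        self._rng = random.Random(seed)
        self.successes = {v: 0 for v in variations}
        self.failures = {v: 0 for v in variations}

    def choose(self) -> str:
        # Draw a plausible conversion rate for each variation from its
        # Beta posterior, then serve the variation with the highest draw.
        draws = {
            v: self._rng.betavariate(self.successes[v] + 1, self.failures[v] + 1)
            for v in self.successes
        }
        return max(draws, key=draws.get)

    def record(self, variation: str, converted: bool) -> None:
        if converted:
            self.successes[variation] += 1
        else:
            self.failures[variation] += 1


def simulate(true_rates: dict[str, float], visitors: int, seed: int = 0) -> dict[str, int]:
    """Simulate visitors and return how often each variation was served."""
    sampler = ThompsonSampler(true_rates, seed=seed)
    outcome_rng = random.Random(seed + 1)
    served = {v: 0 for v in true_rates}
    for _ in range(visitors):
        variation = sampler.choose()
        served[variation] += 1
        sampler.record(variation, outcome_rng.random() < true_rates[variation])
    return served


# Made-up conversion rates; in a real test these are unknown.
print(simulate({"A": 0.05, "B": 0.10, "C": 0.30}, visitors=3000, seed=7))
```

Early on, every variation gets a fair share of traffic. As evidence accumulates, the weaker variations are served less and less, which is the "fewer lost conversions" effect described above.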

The dashboard gives marketers a clear, immediate view of how each variation is performing against key goals. For teams looking to build a more comprehensive framework around these powerful features, our guide on creating an automated marketing strategy offers a deeper dive.

Applying Experimentation Principles to SharePoint

For organizations that use SharePoint as a primary intranet or collaboration hub, the principles of A/B/n testing are just as relevant. While SharePoint doesn't have the same native testing tools as Sitecore, you can still foster a culture of experimentation.

There are two main ways to approach this:

- Custom Development: You can use the SharePoint Framework (SPFx) to build custom web parts that serve different content variations to different user groups. This path gives you maximum control but does require development resources.

- Third-Party Integration: Another option is to integrate dedicated testing and personalization tools that are compatible with SharePoint. This aligns perfectly with a composable DXP architecture, where you plug in best-in-class tools for specific jobs.

This composable approach is a huge advantage for modern enterprises. It allows you to use the powerful native capabilities of a platform like Sitecore for your public websites while retaining the flexibility to integrate other specialized tools into your wider digital ecosystem, including SharePoint. This helps future-proof your optimization strategy, ensuring you can test and improve every single digital touchpoint.

An A/B/n testing campaign doesn't end when you find a winner; that’s where the real work begins. The goal isn't just to pick a better version of a page, but to build a smarter, more agile organization. Once a test wraps up, your first move should be to dig deeper than the surface-level result and segment your findings.

How did each variation perform for different user groups? Maybe new visitors loved Variation B, but returning customers engaged more with Variation C. What about performance across traffic sources, like organic search versus paid campaigns? This level of analysis turns a simple win-or-lose outcome into a goldmine of strategic insight.
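As a rough illustration of that kind of breakdown, the Python sketch below groups raw visit records by segment and variation and reports the best performer in each segment. The record format, the function names, and the tiny sample data set are all made up for this example. Real exports from your analytics tool will look different.

```python
from collections import defaultdict


def conversion_by_segment(visits):
    """Conversion rate for each (segment, variation) pair.

    visits: iterable of (segment, variation, converted) tuples.
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [conversions, visitors]
    for segment, variation, converted in visits:
        totals[(segment, variation)][0] += int(converted)
        totals[(segment, variation)][1] += 1
    return {key: conversions / count for key, (conversions, count) in totals.items()}


def winner_per_segment(rates):
    """Pick the variation with the highest conversion rate in each segment."""
    best = {}
    for (segment, variation), rate in rates.items():
        if segment not in best or rate > best[segment][1]:
            best[segment] = (variation, rate)
    return {segment: variation for segment, (variation, _) in best.items()}


# Tiny, made-up data set: (segment, variation, converted)
visits = [
    ("new", "B", True), ("new", "B", True), ("new", "B", False), ("new", "B", False),
    ("new", "C", True), ("new", "C", False), ("new", "C", False), ("new", "C", False),
    ("returning", "B", True), ("returning", "B", False),
    ("returning", "B", False), ("returning", "B", False),
    ("returning", "C", True), ("returning", "C", True),
    ("returning", "C", True), ("returning", "C", False),
]

print(winner_per_segment(conversion_by_segment(visits)))  # {'new': 'B', 'returning': 'C'}
```

Keep in mind that every segment contains fewer visitors than the full test, so segment-level winners are best treated as hypotheses for a follow-up experiment rather than as proven results.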

Deep Diving with Sitecore AI

This is where the power of an integrated DXP like Sitecore becomes crystal clear. Manually slicing data for dozens of segments is tedious and error-prone. Sitecore AI, on the other hand, automates a huge chunk of this deep analysis. Its machine learning models can sift through test results and find high-performing segments you might not have even thought to look for.

For instance, the AI might discover that a specific page layout dramatically boosts conversions for mobile users from a particular geographic region. That insight goes far beyond "Variation C won." It gives you a concrete, actionable opportunity for personalization, turning your testing program from a blunt instrument into a precision tool for understanding what your customers really want.

The true value of A/B/n testing in Sitecore isn’t just finding a single winner. It's about using the platform’s AI capabilities to uncover pockets of opportunity, revealing exactly why certain experiences resonate with specific audiences and using that knowledge to build a smarter personalization strategy.

From One-Off Tests to a Compounding Growth Engine

To build a true culture of optimization, you can't let valuable insights get lost or forgotten. Every test, whether it produced a clear winner or an inconclusive result, is a learning opportunity. The key is to document these learnings in a centralized, accessible knowledge base. For many teams, a dedicated SharePoint site works perfectly for this.

This knowledge base should detail:

- The Original Hypothesis: What problem were you trying to solve?

- Test Variations: What changes did you test?

- Key Metrics: What were the primary and secondary KPIs?

- Results & Analysis: What were the outcomes, including segment-specific performance?

- Next Steps: How will these learnings inform future tests or site changes?
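If you want each entry to follow the same shape, a small structured record can help. The Python sketch below mirrors the five items above. The class and field names are hypothetical, and the JSON output is simply one convenient format to paste into or upload to whatever knowledge base you use.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ExperimentRecord:
    """One knowledge-base entry per experiment (hypothetical structure)."""

    hypothesis: str
    variations: list[str]
    primary_metric: str
    secondary_metrics: list[str] = field(default_factory=list)
    results: dict[str, float] = field(default_factory=dict)  # fill in after the test
    segment_notes: list[str] = field(default_factory=list)
    next_steps: list[str] = field(default_factory=list)

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


# Example entry based on the checkout hypothesis used earlier in this guide.
record = ExperimentRecord(
    hypothesis=(
        "Replacing the multi-step mobile checkout with a single-page accordion "
        "layout will reduce cart abandonment by 15% for mobile users."
    ),
    variations=["A: current multi-step checkout", "B: single-page accordion"],
    primary_metric="cart completion rate",
    secondary_metrics=["average order value", "time to completion", "upsell engagement"],
    next_steps=["Record results and segment findings once the test has finished."],
)

print(record.to_json())
```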

Documenting this information stops your team from solving the same problems over and over. It creates an institutional memory that turns isolated tests into a compounding engine for growth. To build a robust and impactful experimentation program, it's essential to understand a modern conversion optimization strategy.

This disciplined approach is becoming the new standard. We're seeing a major trend away from fragmented tests and toward continuous, AI-orchestrated optimization across all digital channels. This "always-on intelligence," supported by standardized processes known as ExperimentOps, compounds learnings and is critical for enterprise teams managing global digital presences. By adopting this mindset, every decision becomes a chance to learn, improve, and build a more intelligent digital experience.

Even the most buttoned-up A/B/n testing programs, whether running on Sitecore AI or a custom SharePoint setup, can get thrown off course by a few common mistakes. Experience teaches you where the landmines are hidden. Steering clear of them is the key to turning your optimization efforts into real business value, not just a pile of inconclusive data and wasted time.

One of the easiest traps to fall into is chasing the local maximum. This is what happens when teams get bogged down optimizing tiny elements, like endlessly tweaking button colors or fussing over minor headline changes. Sure, these tests might produce small wins, but they often keep you from finding the game-changing breakthroughs.

A high-impact testing program moves beyond fiddling with the small stuff. Instead of testing five shades of blue for a call-to-action button, a powerful test in Sitecore would pit a standard product page against a dynamic, AI-personalized version and a third, video-centric layout.

Misreading the Data and Calling It Quits Too Early

Another critical error is running tests on low-traffic pages or ending them the second a variation pulls ahead. An A/B/n test splits your audience across several versions, which means each one gets less traffic than in a simple A/B test. Stopping the moment one variation leads often produces false positives: early leads are frequently just random fluctuation, and with several variations in play there are more chances for one of them to look like a winner by luck alone.

Your results need to be solid, not just random noise. This is especially true on complex platforms like Sitecore, where multiple personalization rules or campaigns might be running at once. Always calculate your required sample size before you launch, and let the test run its full course to collect reliable data.
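For readers who want to see what "reaching significance" means in practice, here is a minimal Python sketch that compares each variation against the control with a two-proportion z-test. It is one standard approach, not a description of how Sitecore evaluates tests. The function names, the Bonferroni adjustment for multiple variations, and the visitor counts are assumptions for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / std_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


def significant_variations(control, variations, alpha: float = 0.05) -> dict[str, bool]:
    """Flag variations that differ from the control.

    control: (conversions, visitors); variations: name -> (conversions, visitors).
    alpha is split across the comparisons (Bonferroni) so that testing
    several variations does not inflate the false-positive rate.
    """
    if not variations:
        return {}
    threshold = alpha / len(variations)
    return {
        name: two_proportion_p_value(control[0], control[1], conv, n) < threshold
        for name, (conv, n) in variations.items()
    }


# Made-up counts: (conversions, visitors)
control = (500, 10_000)
challengers = {"B": (510, 10_000), "C": (650, 10_000)}
print(significant_variations(control, challengers))  # {'B': False, 'C': True}
```

Variation B is nominally ahead of the control in this made-up data, yet the difference is well within random noise. That is exactly the kind of "lead" that tempts teams to stop a test early.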

Likewise, don't forget to account for outside influences that can contaminate your results. Pay attention to things like concurrent marketing campaigns, seasonal sales, or major industry news that could skew visitor behavior. Isolating the test's impact is everything.

Common Gaffes in Sitecore and SharePoint

- Ignoring Segmentation: A "winning" variation might not be the winner for everyone. If you don't analyze how different segments performed—like new vs. returning visitors or mobile vs. desktop users—you're leaving huge personalization opportunities on the table.

- Technical Glitches: A slow-loading variation or a single broken script can completely invalidate your test. Always run a thorough QA on every variation across different browsers and devices before launch to make sure it's a fair fight.

- Forgetting the "Why": Your test will tell you what happened, but it won't always explain why. Back up your quantitative A/B/n data with qualitative feedback, like user surveys or session recordings, to get inside your users' heads and understand the motivation behind the numbers.

Frequently Asked Questions About A/B/n Testing

When enterprise teams start using A/B/n testing, practical questions always come up. This FAQ gives you direct, actionable answers that build on the key ideas in this guide, especially for those working in a Sitecore or other DXP environment.

How Much Traffic Do I Need for A/B/n Testing?

The traffic you need depends on your current conversion rate and how much of an improvement you expect to see. Because A/B/n testing splits your audience into three or more groups, it naturally demands more traffic than a standard A/B test to get statistically significant results in a reasonable time.

A good rule of thumb is to use an online sample size calculator before you even think about launching an experiment. Pages with thousands of monthly conversions are usually the strongest candidates. If you're working with lower-traffic pages, you'll either need to let the test run for a much longer time or focus on testing bigger, more dramatic changes that are easier to detect.

For anyone using Sitecore, this means you should focus your first A/B/n tests on high-value pages. Think landing pages, major product categories, or the steps in your checkout funnel. Put your effort where the data is rich enough to give you insights you can trust.
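Once you have a required sample size, a quick back-of-the-envelope calculation shows whether a page has enough traffic to be a realistic candidate. The Python sketch below is a rough estimate under simple assumptions: steady daily traffic and an even split. The function name and the example numbers are hypothetical. Rounding up to whole weeks is a common convention for covering weekday and weekend behavior, not a platform requirement.

```python
from math import ceil


def estimated_test_days(
    required_per_variation: int,
    variations: int,
    daily_visitors: int,
    traffic_share: float = 1.0,
) -> int:
    """Days needed for every variation to reach its required sample size.

    traffic_share is the fraction of page visitors included in the test.
    """
    total_needed = required_per_variation * variations
    eligible_per_day = daily_visitors * traffic_share
    return ceil(total_needed / eligible_per_day)


def round_up_to_full_weeks(days: int) -> int:
    """Extend the run to whole weeks so every weekday is represented equally."""
    return ceil(days / 7) * 7


# Hypothetical page: 2,000 visitors a day, three variations, 8,000 visitors needed in each.
days = estimated_test_days(required_per_variation=8_000, variations=3, daily_visitors=2_000)
print(days)                          # 12
print(round_up_to_full_weeks(days))  # 14
```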

What Is the Main Difference Between A/B/n and Multivariate Testing?

The real difference is all about what you're comparing. In A/B/n testing, you're pitting multiple, distinct versions of a page against one another. You might test three completely different homepage layouts, for instance. The goal is simple: find out which overall version wins.

Multivariate Testing (MVT), on the other hand, tests different combinations of elements on a single page. You might test two headlines and three images, which creates six unique combinations for users to see. MVT tells you which specific combination of elements works best together.

Think of it this way: A/B/n testing is for validating big, strategic ideas. MVT is for fine-tuning pages that already get a lot of traffic.

Can A/B/n Testing Hurt My SEO?

No—as long as you do it correctly. Search engines actually encourage you to run tests to improve the user experience. The key is to follow best practices so you don’t fall into common traps that can hurt your rankings.

Make sure you follow these critical guidelines:

- Use rel="canonical": Every variation page needs this tag, and it must point back to the original (control) URL. This is how you tell search engines which page is the official version.

- Avoid Cloaking: Never show the search engine bot user-agent different content than what a human visitor sees. That’s a fast track to getting penalized.

- Implement Winning Changes: As soon as a test is over, update the original page with the winning design. Then, get rid of all the testing scripts and alternate pages promptly.

Modern DXPs like Sitecore handle many of these technical SEO details for you, which makes running experiments a lot safer and easier.
